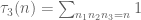

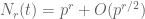

As in previous posts, we use the following asymptotic notation: is a parameter going off to infinity, and all quantities may depend on

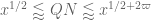

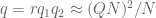

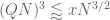

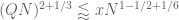

unless explicitly declared to be “fixed”. The asymptotic notation

is then defined relative to this parameter. A quantity

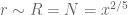

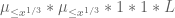

is said to be of polynomial size if one has

, and bounded if

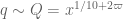

. We also write

for

, and

for

.

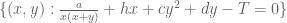

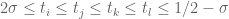

The purpose of this post is to collect together all the various refinements to the second half of Zhang’s paper that have been obtained as part of the polymath8 project and present them as a coherent argument. In order to state the main result, we need to recall some definitions. If $I$ is a bounded subset of ${\bf R}$, let ${\mathcal S}_I$ denote the square-free numbers whose prime factors lie in $I$, and let $P_I := \prod_{p \in I} p$ denote the product of the primes $p$ in $I$. Note by the Chinese remainder theorem that the set $({\bf Z}/P_I{\bf Z})^\times$ of primitive congruence classes $a$ modulo $P_I$ can be identified with the tuples $(a_q)_{q \in {\mathcal S}_I}$ of primitive congruence classes $a_q \in ({\bf Z}/q{\bf Z})^\times$ modulo $q$ for each $q \in {\mathcal S}_I$ which are compatible under the Chinese remainder theorem, in the sense that $a_{qr} \equiv a_q \pmod q$ and $a_{qr} \equiv a_r \pmod r$ for all coprime $q, r \in {\mathcal S}_I$, since one can identify $a$ with the tuple given by $a_q := a \bmod q$ for each $q \in {\mathcal S}_I$.

If $y \geq 1$ and $n$ is a natural number, we say that $n$ is $y$-densely divisible if, for every $1 \leq R \leq n$, one can find a factor of $n$ in the interval $[y^{-1}R, R]$. We say that $n$ is doubly $y$-densely divisible if, for every $1 \leq R \leq n$, one can find a factor $m$ of $n$ in the interval $[y^{-1}R, R]$ such that $m$ is itself $y$-densely divisible. We let ${\mathcal D}^{(2)}_y$ denote the set of doubly $y$-densely divisible natural numbers, and ${\mathcal D}^{(1)}_y$ the set of $y$-densely divisible numbers.
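The two definitions above can be tested mechanically for small $n$. The following Python sketch is only an illustration, and its function names are hypothetical. It relies on the observation that $n$ is $y$-densely divisible exactly when each divisor of $n$ is at most $y$ times the preceding one. Taking $R$ just below a divisor shows that this condition is necessary. Taking the largest divisor not exceeding $R$ shows that it is sufficient. For double dense divisibility, the same gap test is applied to the divisors that are themselves $y$-densely divisible. In addition, the largest of these divisors must be at least $n/y$, which covers the case $R = n$.

```python
def divisors(n: int) -> list[int]:
    """Sorted positive divisors of n, by trial division (fine for small n)."""
    small, large = [], []
    d = 1
    while d * d <= n:
        if n % d == 0:
            small.append(d)
            if d != n // d:
                large.append(n // d)
        d += 1
    return small + large[::-1]


def gaps_at_most(ds: list[int], y: float) -> bool:
    """True if each element of the sorted list ds is at most y times its predecessor."""
    return all(b <= y * a for a, b in zip(ds, ds[1:]))


def is_densely_divisible(n: int, y: float) -> bool:
    # Every interval [R/y, R] with 1 <= R <= n meets a divisor of n
    # iff consecutive divisors differ by a factor of at most y.
    return gaps_at_most(divisors(n), y)


def is_doubly_densely_divisible(n: int, y: float) -> bool:
    # Keep only the divisors m of n that are themselves y-densely divisible
    # (m = 1 always qualifies); the largest one must also reach [n/y, n].
    good = [m for m in divisors(n) if is_densely_divisible(m, y)]
    return gaps_at_most(good, y) and y * good[-1] >= n


# 30 = 2*3*5 is 2-densely divisible but not doubly 2-densely divisible.
print(is_densely_divisible(30, 2), is_doubly_densely_divisible(30, 2))
```

For instance, $30 = 2 \cdot 3 \cdot 5$ passes the single test with $y = 2$ but fails the double one. For $R$ slightly below $6$, the interval $[R/2, R]$ contains only the divisors $3$ and $5$, and neither of them is $2$-densely divisible.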

Given any finitely supported sequence $\alpha: {\bf N} \to {\bf C}$ and any primitive residue class $a\ (q)$, we define the discrepancy

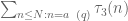
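Suppose the discrepancy takes the usual form from the earlier posts, $\Delta(\alpha; a\ (q)) := \sum_{n \equiv a\ (q)} \alpha(n) - \frac{1}{\phi(q)} \sum_{(n,q)=1} \alpha(n)$. Under that assumption, the Python sketch below evaluates it for $\alpha = \Lambda 1_{[x,2x]}$ with small $x$ and $q$. The helper names are hypothetical. As a quick consistency check, the discrepancies summed over all primitive classes $a\ (q)$ give zero, because the two terms then cancel exactly.

```python
from math import gcd, log


def von_mangoldt(n: int) -> float:
    """Lambda(n) = log p if n is a power of a prime p, and 0 otherwise."""
    if n < 2:
        return 0.0
    p = 2
    while p * p <= n:
        if n % p == 0:          # p is the smallest prime factor of n
            while n % p == 0:
                n //= p
            return log(p) if n == 1 else 0.0
        p += 1
    return log(n)               # no factor up to sqrt(n): n is prime


def euler_phi(q: int) -> int:
    return sum(1 for m in range(1, q + 1) if gcd(m, q) == 1)


def discrepancy(alpha: dict[int, float], a: int, q: int) -> float:
    """Delta(alpha; a (q)) for a finitely supported alpha given as {n: alpha(n)}."""
    in_class = sum(v for n, v in alpha.items() if (n - a) % q == 0)
    coprime = sum(v for n, v in alpha.items() if gcd(n, q) == 1)
    return in_class - coprime / euler_phi(q)


x, q = 10_000, 12
alpha = {n: von_mangoldt(n) for n in range(x, 2 * x + 1)}
deltas = {a: discrepancy(alpha, a, q) for a in range(q) if gcd(a, q) == 1}
print({a: round(d, 2) for a, d in deltas.items()})
```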

For any fixed $\varpi, \delta > 0$, we let $MPZ^{(2)}[\varpi,\delta]$ denote the assertion that

$$\sum_{q \in {\mathcal S}_I \cap {\mathcal D}^{(2)}_{x^\delta}: q \leq x^{1/2+2\varpi}} |\Delta(\Lambda 1_{[x,2x]}; a\ (q))| \ll x \log^{-A} x \qquad (1)$$

for any fixed $A > 0$, any bounded $I \subset {\bf R}$, and any primitive $a \in ({\bf Z}/P_I{\bf Z})^\times$, where $\Lambda$ is the von Mangoldt function. Importantly, we do not require $I$ or $a$ to be fixed; in particular $I$ could grow polynomially in $x$, and $a$ could grow exponentially in $x$, but the implied constant in (1) would still need to be fixed (so it has to be uniform in $I$ and $a$). (In previous formulations of these estimates, the system of congruence classes $a$ was also required to obey a controlled multiplicity hypothesis, but we no longer need this hypothesis in our arguments.) In this post we will record the proof of the following result, which is currently the best distribution result produced by the ongoing polymath8 project to optimise Zhang’s theorem on bounded gaps between primes:

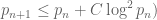

This improves upon the previous constraint of (see this previous post), although that latter statement was stronger in that it only required single dense divisibility rather than double dense divisibility. However, thanks to the efficiency of the sieving step of our argument, the upgrade of the single dense divisibility hypothesis to double dense divisibility costs almost nothing with respect to the

parameter (which, using this constraint, gives a value of

as verified in these comments, which then implies a value of

).

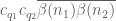

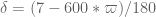

This estimate is deduced from three sub-estimates, which require a bit more notation to state. We need a fixed quantity .

Definition 2 A coefficient sequence is a finitely supported sequence

that obeys the bounds

for all

, where

is the divisor function.

- (i) A coefficient sequence

is said to be at scale

for some

if it is supported on an interval of the form

.

- (ii) A coefficient sequence

at scale

is said to obey the Siegel-Walfisz theorem if one has

for any

, any fixed

, and any primitive residue class

.

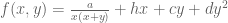

- (iii) A coefficient sequence

at scale

(relative to this choice of

) is said to be smooth if it takes the form

for some smooth function

supported on

obeying the derivative bounds

for all fixed

(note that the implied constant in the

notation may depend on

).

Definition 3 (Type I, Type II, Type III estimates) Let

,

, and

be fixed quantities. We let

be an arbitrary bounded subset of

, and

a primitive congruence class.

- (i) We say that

holds if, whenever

are quantities with

and

for some fixed

, and

are coefficient sequences at scales

respectively, with

obeying a Siegel-Walfisz theorem, we have

- (ii) We say that

holds if the conclusion (7) of

holds under the same hypotheses as before, except that (6) is replaced with

for some sufficiently small fixed

.

- (iii) We say that

holds if, whenever

are quantities with

and

are coefficient sequences at scales

respectively, with

smooth, we have

Theorem 1 is then a consequence of the following four statements.

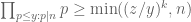

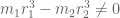

Theorem 4 (Type I estimate)

holds whenever

are fixed quantities such that

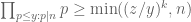

Theorem 5 (Type II estimate)

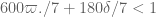

holds whenever

are fixed quantities such that

Theorem 6 (Type III estimate)

holds whenever

,

, and

are fixed quantities such that

In particular, if

then all values of

that are sufficiently close to

are admissible.

Lemma 7 (Combinatorial lemma) Let

,

, and

be such that

,

, and

simultaneously hold. Then

holds.

Indeed, if , one checks that the hypotheses for Theorems 4, 5, 6 are obeyed for

sufficiently close to

, at which point the claim follows from Lemma 7.

The proofs of Theorems 4, 5, 6 will be given below the fold, while the proof of Lemma 7 follows from the arguments in this previous post. We remark that in our current arguments, the double dense divisibility is only fully used in the Type I estimates; the Type II and Type III estimates are also valid just with single dense divisibility.

Remark 1 Theorem 6 is vacuously true for

, as the condition (10) cannot be satisfied in this case. If we use this trivial case of Theorem 6, while keeping the full strength of Theorems 4 and 5, we obtain Theorem 1 in the regime

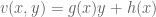

— 1. Exponential sum estimates —

It will be convenient to introduce a little bit of formal algebraic notation. Define an integral rational function to be a formal rational function in a formal indeterminate

where

are polynomials with integer coefficients, and

is monic; in particular any polynomial

can be identified with the integral rational function

. For minor technical reasons we do not equate integral rational functions under cancelling, thus for instance we consider

to be distinct from

; we need to do this because the domain of definition of these two functions is a little different (the former is not defined when

, but the latter can still be defined here). Because we refuse to cancel, we have to be a little careful how we define algebraic operations: specifically, we define

Note that the denominator always remains monic with respect to these operations. This is not quite a ring with a derivation (the subtraction operation does not quite cancel the addition operation due to the inability to cancel) but this will not bother us in practice. (On the other hand, addition and multiplication remain associative, and the latter continues to distribute over the former, and differentiation obeys the usual sum and product rules.) Note that if is an integral rational function, we can localise it modulo

for any modulus

to obtain a rational function

that is the ratio of two polynomials

in

, with the denominator monic and hence non-vanishing. We can define the algebraic operations of addition, subtraction, multiplication, and differentiation on integral rational functions modulo

by the same formulae as above, and we observe that these operations are completely compatible with their counterparts over

(even without the ability to cancel), thus for instance

. We say that

is divisible by

, and write

, if the numerator

of

has all coefficients divisible by

.
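Write $f = P/Q$ (our notation). Assume the operations are the usual cross-multiplication formulas applied without any cancellation, for instance $\frac{P_1}{Q_1} + \frac{P_2}{Q_2} := \frac{P_1 Q_2 + P_2 Q_1}{Q_1 Q_2}$, $\frac{P_1}{Q_1} \cdot \frac{P_2}{Q_2} := \frac{P_1 P_2}{Q_1 Q_2}$ and $\left(\frac{P}{Q}\right)' := \frac{P'Q - PQ'}{Q^2}$. Under that assumption, the following Python sketch shows three things: denominators stay monic, $f - f$ is formally $0/Q^2$ rather than $0/1$, and localisation modulo $q$ and the relation $q \mid f$ behave as described. The class `IRF` and all helper names are hypothetical. Evaluation returns `None` where the denominator is a zero divisor modulo $q$.

```python
from dataclasses import dataclass
from math import gcd

# Polynomials are tuples of integer coefficients, constant term first.


def p_add(p, r):
    n = max(len(p), len(r))
    return tuple((p[i] if i < len(p) else 0) + (r[i] if i < len(r) else 0) for i in range(n))


def p_neg(p):
    return tuple(-c for c in p)


def p_mul(p, r):
    out = [0] * (len(p) + len(r) - 1)
    for i, a in enumerate(p):
        for j, b in enumerate(r):
            out[i + j] += a * b
    return tuple(out)


def p_deriv(p):
    return tuple(i * p[i] for i in range(1, len(p))) or (0,)


def p_eval(p, n, q):
    """Value of the polynomial p at n, reduced modulo q."""
    return sum(c * pow(n, i, q) for i, c in enumerate(p)) % q


@dataclass(frozen=True)
class IRF:
    """Formal ratio num/den with den monic; no cancellation is ever performed."""
    num: tuple
    den: tuple

    def __post_init__(self):
        if self.den[-1] != 1:
            raise ValueError("denominator must be monic")

    def __add__(self, o):
        return IRF(p_add(p_mul(self.num, o.den), p_mul(o.num, self.den)), p_mul(self.den, o.den))

    def __sub__(self, o):
        return self + IRF(p_neg(o.num), o.den)

    def __mul__(self, o):
        return IRF(p_mul(self.num, o.num), p_mul(self.den, o.den))

    def deriv(self):
        top = p_add(p_mul(p_deriv(self.num), self.den), p_neg(p_mul(self.num, p_deriv(self.den))))
        return IRF(top, p_mul(self.den, self.den))

    def eval_mod(self, n, q):
        """f(n) in Z/qZ, or None when den(n) is a zero divisor modulo q."""
        d = p_eval(self.den, n, q)
        if gcd(d, q) != 1:
            return None
        return p_eval(self.num, n, q) * pow(d, -1, q) % q

    def divisible_by(self, q):
        """q | f: every coefficient of the numerator is divisible by q."""
        return all(c % q == 0 for c in self.num)


f = IRF((1,), (0, 1))      # the integral rational function 1/n
z = f - f                  # formally 0/n^2, not the polynomial 0
print(z, z.eval_mod(3, 7), z.eval_mod(0, 7))
```

The value of `z` at $n \equiv 0 \pmod 7$ is undefined even though $f - f$ vanishes wherever it is defined. This is the reason the text refuses to cancel.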

If is an integral rational function and

, then

is well defined as an element of

except when

is a zero divisor in

. We adopt the convention that

when

is a zero divisor in

, thus

is really shorthand for

; by abuse of notation we view

both as a function on

and as a

-periodic function on

. Thus for instance

Note that if , then

for all

for which

is well defined. We define

to be the largest factor

of

for which

; in particular, if

is square-free, we have

Note with these conventions that .

We recall the following Chinese remainder theorem:

Lemma 8 (Chinese remainder theorem) Let

with

coprime positive integers. If

is a rational function, then

for all integers

, where

is the inverse of

in

and similarly for

.

When there is no chance of confusion we will write for

(though note that

does not qualify as an integral rational function since the constant

is not monic).

Proof: See Lemma 7 of this previous post.
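Assume Lemma 8 is the standard identity $e_q(a) = e_{q_1}(a\overline{q_2})\, e_{q_2}(a\overline{q_1})$ for $q = q_1 q_2$ with $(q_1, q_2) = 1$. Here $e_q(a) := e^{2\pi i a/q}$, and $\overline{q_2}$ denotes the inverse of $q_2$ modulo $q_1$ (and vice versa). The identity holds because $q_2\overline{q_2} + q_1\overline{q_1} \equiv 1 \pmod{q_1 q_2}$. Applying it with $a$ equal to the value of $f(n)$ modulo $q$ should give the version used in the text. The Python sketch below checks the integer identity numerically. Its helper names are hypothetical.

```python
import cmath


def e_q(a: int, q: int) -> complex:
    """e_q(a) = exp(2 pi i a / q); depends only on a mod q."""
    return cmath.exp(2j * cmath.pi * (a % q) / q)


def crt_factorised(a: int, q1: int, q2: int) -> complex:
    """e_{q1}(a * inv(q2)) * e_{q2}(a * inv(q1)) for coprime q1, q2 > 1."""
    inv_q2 = pow(q2, -1, q1)  # inverse of q2 in Z/q1Z
    inv_q1 = pow(q1, -1, q2)  # inverse of q1 in Z/q2Z
    return e_q(a * inv_q2, q1) * e_q(a * inv_q1, q2)


q1, q2 = 5, 7
worst = max(abs(e_q(a, q1 * q2) - crt_factorised(a, q1, q2)) for a in range(q1 * q2))
print(worst)  # of the order of floating-point rounding error
```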

Now we give an estimate for complete exponential sums, which combines Ramanujan sum bounds with Weil conjecture bounds.

Proposition 9 (Ramanujan-Weil bounds) Let

be a positive square-free integer of polynomial size, and let

be an integral rational function with

of bounded degree. Then we have

Proof: See Proposition 4 of this previous post.
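Proposition 9 itself is not reproduced here. The two classical inputs it combines can, however, be seen numerically in small cases. The Ramanujan sum $c_q(a) = \sum_{n \in ({\bf Z}/q{\bf Z})^\times} e_q(an)$ satisfies $|c_q(a)| \leq (a,q)$. For a prime $p \nmid ab$, the Kloosterman sum $S(a,b;p) = \sum_{n \in ({\bf Z}/p{\bf Z})^\times} e_p(an + b\overline{n})$ obeys Weil's bound $|S(a,b;p)| \leq 2\sqrt{p}$. The Python sketch below checks both bounds by brute force. It is an illustration rather than a proof, and its helper names are hypothetical.

```python
import cmath
from math import gcd, isqrt


def e_q(a, q):
    return cmath.exp(2j * cmath.pi * (a % q) / q)


def ramanujan_sum(a, q):
    return sum(e_q(a * n, q) for n in range(1, q + 1) if gcd(n, q) == 1)


def kloosterman_sum(a, b, p):
    """S(a, b; p) for a prime p, summing over n in (Z/pZ)^x."""
    return sum(e_q(a * n + b * pow(n, -1, p), p) for n in range(1, p))


def is_prime(n):
    return n > 1 and all(n % d for d in range(2, isqrt(n) + 1))


ramanujan_ok = all(abs(ramanujan_sum(a, q)) <= gcd(a, q) + 1e-9
                   for q in range(1, 61) for a in range(q))
weil_ok = all(abs(kloosterman_sum(a, b, p)) <= 2 * p ** 0.5 + 1e-9
              for p in range(3, 40) if is_prime(p)
              for a in range(1, p) for b in range(1, p))
print(ramanujan_ok, weil_ok)
```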

Proposition 10 (Incomplete sums) Let

be a positive square-free integer of polynomial size, and let

be an integral rational function with

of bounded degree with

. Let

be a fixed integer, and suppose that we have a factorisation

. Then for any

, and any coefficient sequence

at scale

of polynomial size, one has

where

and

.

Proof: Let be a sufficiently large fixed quantity depending on the degrees of

and

. We first make the technical reduction that it suffices to establish the claim in the case when

has no prime factors less than

, for otherwise one can factor

where

is the product of all the prime factors of

less than

, and by splitting the

summation into residue classes

and performing the substitution

and applying the proposition with

replaced by

(and adjusting

accordingly) we obtain the claim.

By dividing through by

(and replacing

with

) we may assume without loss of generality that

and

. As

vanishes at infinity, this implies that

and

(see Lemma 3 from this previous post).

We induct on . We begin with the base case

, where the task is to show that

By Proposition 9, we have

so we may delete the condition on the right-hand side without penalty.

By completion of sums (see Lemma 6 of this previous post), the left-hand side is

so it will suffice to show that

By Proposition 9 again, the left-hand side is

Since , we see that

, and the claim follows.
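The completion-of-sums step in the base case rests on an elementary identity. The precise statement of Lemma 6 of the previous post is not reproduced here. If $g$ is $q$-periodic and $I$ is an interval, then $\sum_{n \in I} g(n) = \sum_{h \in {\bf Z}/q{\bf Z}} \hat g(h) \sum_{n \in I} e_q(hn)$, where $\hat g(h) := \frac{1}{q}\sum_{m \in {\bf Z}/q{\bf Z}} g(m) e_q(-hm)$. The inner geometric sums obey $|\sum_{n \in I} e_q(hn)| \leq \frac{1}{2\|h/q\|}$ for $h \neq 0$. The Python sketch below checks both facts for $g(n) = e_p(\overline{n})$. We set $g(n) = 0$ when $p \mid n$, in the spirit of the zero-divisor convention adopted above. The helper names are hypothetical.

```python
import cmath


def e_q(a, q):
    return cmath.exp(2j * cmath.pi * (a % q) / q)


def g(n, p):
    """e_p(1/n), with the convention g(n) = 0 when p divides n."""
    return 0 if n % p == 0 else e_q(pow(n, -1, p), p)


def completed(p, start, length):
    """Completed form of sum_{start <= n < start + length} g(n)."""
    total = 0
    for h in range(p):
        g_hat = sum(g(m, p) * e_q(-h * m, p) for m in range(p)) / p
        geometric = sum(e_q(h * n, p) for n in range(start, start + length))
        total += g_hat * geometric
    return total


def norm_dist(t):
    """Distance from t to the nearest integer."""
    return abs(t - round(t))


p, start, length = 101, 17, 40
direct = sum(g(n, p) for n in range(start, start + length))
bound_ok = all(
    abs(sum(e_q(h * n, p) for n in range(start, start + length))) <= 1 / (2 * norm_dist(h / p)) + 1e-9
    for h in range(1, p))
print(abs(direct - completed(p, start, length)), bound_ok)
```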

Now suppose that , and that the claim has already been proven for

. We use the $q$-van der Corput $A$-process of Heath-Brown and Graham-Ringrose. If we have

, then

and the claim then follows by the induction hypothesis (concatenating and

). Similarly, if

, then

, and the claim follows from the triangle inequality. Thus we may assume that

Let . We can rewrite

as

By Lemma 8 we have , and so by the triangle inequality and the Cauchy-Schwarz inequality

since the summand is only non-zero when is supported on an interval of length

. This last expression may be rearranged as

The diagonal contribution can be estimated by

, which is acceptable, so it suffices to show that

We observe that is an integral rational function whose numerator has lower degree than the denominator. If a prime

dividing

also divides this rational function, then

is divisible by

; if

is not divisible by

, this implies by telescoping series that

for all

. This implies that

is constant where it is defined; as

vanishes at infinity and is defined outside of

elements, this implies that

(here we use the fact that

must exceed

, since it divides

). We conclude that

Applying the induction hypothesis and Proposition 9, we may thus bound

by

The contribution of the first two terms to (14) is acceptable, so the only contribution remaining to control is

If we bound by

, we can bound this expression by

which by Lemma 5 of this previous post and the bound is bounded by

which is acceptable.

We record a special case of the above proposition:

Corollary 11 Let

be square-free numbers (not necessarily coprime) of polynomial size, let

be integers, let

, and let

be a coefficient sequence at scale

. Suppose that

is

-densely divisible. Let

be a residue class with

. Then

where

for

. We also have the variant bound

Proof: We first consider the case , so that the congruence condition

can be deleted. By the dense divisibility hypothesis we may factor

for some

and

The first bound then follows from the case of Proposition 10, combined with the bound

that is proven as part of Proposition 5 of this previous post. The second bound similarly follows from the case of Proposition 10.

Now we consider the case when . Writing

,

, and

, we have from Lemma 8 that

and similarly

If we then apply the previous results with replaced by

(with

being

-densely divisible) and

replaced by

(and with suitable alterations to

), we obtain the required claims.

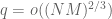

For the Type III estimate we will also need a deeper exponential sum estimate, involving the hyper-Kloosterman sums

for square-free and

.)
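Assume the standard normalisation $\hbox{Kl}_3(a;p) = \frac{1}{p}\sum_{x,y,z \in ({\bf Z}/p{\bf Z})^\times: xyz = a} e_p(x+y+z)$ for a prime $p$ and $a$ coprime to $p$. With this normalisation, the Deligne bound used in the proof of Lemma 12 reads $|\hbox{Kl}_3(a;p)| \leq 3$. The brute-force Python sketch below checks this for small primes. It also checks the symmetry $\hbox{Kl}_3(-a;p) = \overline{\hbox{Kl}_3(a;p)}$, which follows by negating $x, y, z$. The function names are hypothetical.

```python
import cmath


def e_q(a, q):
    return cmath.exp(2j * cmath.pi * (a % q) / q)


def kl3(a, p):
    """Normalised hyper-Kloosterman sum (1/p) * sum_{xyz = a mod p} e_p(x + y + z)."""
    total = 0
    for x in range(1, p):
        for y in range(1, p):
            z = a * pow(x * y, -1, p) % p  # z is determined by x and y
            total += e_q(x + y + z, p)
    return total / p


primes = [3, 5, 7, 11, 13, 17, 19, 23, 29, 31]
deligne_ok = all(abs(kl3(a, p)) <= 3 + 1e-9 for p in primes for a in range(1, p))
print(deligne_ok)
```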

Lemma 12 (Correlation of hyper-Kloosterman sums) Let

be square-free numbers of polynomial size with

. Let

,

, and

. Then

where

.

Proof: From Lemma 8 we have

and so it suffices to prove the estimates

and

By further application of Lemma 8, together with the divisor bound, it suffices to show that

whenever ,

, and

.

Suppose first that , so that

. Then

, and the left-hand side simplifies to

which can be expanded as

Performing the Fourier summation in , this can be bounded by the sum of

and

The first term either vanishes or is a Kloosterman sum, and is in either case, while the second term can be calculated by Fourier series to be

, and the claim follows. Similarly if

.

Now suppose that and

. Then we use the Deligne bound

to obtain the desired claim. The only remaining case is when

and

or

, so our task is to show that

in this case. A proof of this claim (which uses the full strength of Deligne’s work) can be found in this paper of Michel (see also Proposition 6 of this recent expository note of Fouvry, Kowalski, and Michel).

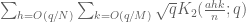

From this and completion of sums we have

Lemma 13 (Correlation of hyper-Kloosterman sums, II) Let

be square-free numbers of polynomial size with

. Let

,

. Let

be a smooth function adapted to the interval

which equals one on

. Then

where

, and the sum runs over those

coprime to

.

— 2. Type I estimate —

We begin the proof of Theorem 4, closely following the arguments from Section 5 of this previous post. Let be as in the theorem. We can restrict

to the range

for some sufficiently slowly decaying , since otherwise we may use the Bombieri-Vinogradov theorem (Theorem 4 from this previous post). Thus, by dyadic decomposition, we need to show that

for any fixed and for any

in the range

be a sufficiently small fixed exponent.

By Lemma 11 of this previous post, we know that for all in

outside of a small number of exceptions, we have

Specifically, the number of exceptions in the interval is

for any fixed

. The contribution of the exceptional

can be shown to be acceptable by Cauchy-Schwarz and trivial estimates (see Section 5 of this previous post), so we restrict attention to those

for which (18) holds. In particular, as

is restricted to be doubly

-densely divisible we may factor

with coprime and square-free, with

-densely divisible with

, and

and

Here we use the easily verified fact that . Since

is

-densely divisible, we also have

.
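The factorisation step above, and the similar factorisations later in the argument, use only the defining property of dense divisibility. For a square-free $y$-densely divisible $n$ and any $1 \leq R \leq n$, one can pick a divisor $d \in [R/y, R]$. Then $n = d \cdot (n/d)$, and $d$ and $n/d$ are automatically coprime. The brute-force Python sketch below performs this selection. Its names are hypothetical.

```python
from math import gcd


def divisors(n):
    return [d for d in range(1, n + 1) if n % d == 0]


def dense_factor(n, R, y):
    """Return (d, n // d) with d | n and R / y <= d <= R, or None if no such d exists."""
    candidates = [d for d in divisors(n) if R / y <= d <= R]
    if not candidates:
        return None
    d = max(candidates)
    return d, n // d


n = 2 * 3 * 5 * 7 * 11 * 13   # square-free and 2-densely divisible
for R in (10, 100, 1000):
    d, e = dense_factor(n, R, 2)
    print(R, d, e, gcd(d, e))
```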

By dyadic decomposition, it thus suffices to show that

for any fixed , where

obey the size conditions

Fix . We abbreviate

and

by

and

respectively, thus our task is to show that

We now split the discrepancy

as the sum of the subdiscrepancies

and

In Section 5 of this previous post, it was established (using the Bombieri-Vinogradov theorem) that

As in the previous notes, we will not take advantage of the summation, and use crude estimates to reduce to showing that

for each individual with

, which we now select. It will suffice to prove the slightly stronger statement

for all coprime to

, since if one then specialises to the case when

and averages over all primitive

we obtain (23) from the triangle inequality.

We use the dispersion method. We write the left-hand side of (24) as

for some bounded sequence (which may also depend on

, but we suppress this dependence). This expression may be rearranged as

so from the Cauchy-Schwarz inequality and crude estimates it suffices to show that

for any fixed , where

is a smooth coefficient sequence at scale

. Expanding out the square, it suffices to show that

where is subject to the same constraints as

(thus

and

for

), and

is some quantity that is independent of

.

Observe that must be coprime to

and

coprime to

, with

, to have a non-zero contribution to (26). We then rearrange the left-hand side as

note that these inverses in the various rings ,

,

are well-defined thanks to the coprimality hypotheses.

We may write for some

. By the triangle inequality, and relabeling

as

, it thus suffices to show that for any particular

for some independent of

,

.

At this stage in previous posts we isolated the coprime case as the dominant case, using a controlled multiplicity hypothesis to deal with the non-coprime case. Here, we will carry the non-coprime case with us for a little longer so as not to rely on a controlled multiplicity hypothesis; this introduces some additional factors of

into the analysis, but these can be ignored on a first reading.

Applying completion of sums (Section 2 from this previous post), we can express the left-hand side of (28) as a main term

where .

Let us first deal with the main term (29). The contribution of the coprime case does not depend on

and can thus be absorbed into the

term. Now we consider the contribution of the non-coprime case when

. We may estimate the contribution of this case by

We may estimate by

. We just estimate the contribution of

, as the other case is treated similarly (after shifting

by

). We rearrange this contribution as

The summation is

. Evaluating the

and

summations, we obtain a bound of

Since and

, we have

, and so we may evaluate the

summation as

By (20) and (19), this is as required.

It remains to control (30). We may assume that , as the claim is trivial otherwise. It will suffice to obtain the bound

Using (31), it will suffice to show that

for each .

We now work with a single , and abbreviate

as

. To proceed further, we write

and

,

; it then suffices to show that

for each .

Henceforth we work with a single choice of . We pause to verify the relationship

From (31) and (21), this follows from the assertion that

but this follows from (5), (6) if is sufficiently small depending on

.

As is

-densely divisible, we may now factor

where

and thus

Factoring out , we may then write

where

and

By dyadic decomposition, it thus suffices to show that

whenever are such that

and

and

We rearrange this estimate as

for some bounded sequence which is only non-zero when

By Cauchy-Schwarz and crude estimates, it then suffices to show that

where is a coefficient sequence at scale

. The left-hand side may be bounded by

The contribution of the diagonal case is

by the divisor bound, which is acceptable since

. Thus it suffices to control the off-diagonal case

. Observe that for a given choice of

, the phase

either vanishes identically, or is equal to

for some quantities with

Also, by construction, ,

and

are

-densely divisible, so

is as well. (Here we use the fact that the least common multiple of two

-densely divisible numbers is again

-densely divisible, which follows from the more general fact that if

,

, and

is

-densely divisible, then

is also.) The condition

is either not satisfiable, or restricts

to a congruence class

for some

dividing

. We can thus apply Corollary 11 and bound

by

Bounding by

, we can thus bound the off-diagonal contribution to (34) by

which sums (using Lemma 5 of this previous post and the divisor bound) to

Discarding some factors of , we reduce to showing that

From (31), (21), (5) we have and

, so the previous estimate will be implied by

From (20), this will be implied by

or equivalently that

and

which by (6) is obeyed whenever

and

The second condition is implied by the first and may be deleted. The proof of Theorem 4 is now complete.

— 3. Type II estimate —

Now we prove Theorem 5. We repeat the Type I arguments through to (33) (noting that the hypothesis (6) is never used until that point, other than to ensure that ), thus we are again faced with the task of proving

This time we do not have  ; however, we claim the weaker bound

Indeed by (31) this is equivalent to

and this follows from (20) and (5), (8).

With this weaker bound (35) we have to perform Cauchy-Schwarz differently. We rearrange the left-hand side as

for some bounded coefficients . Applying Cauchy-Schwarz, it then suffices to show that

The left-hand side may be bounded by

We isolate the diagonal case . By the divisor bound, the contribution of this case is

, which is acceptable by (35). So we now restrict attention to the off-diagonal case

. The phase

either vanishes identically, or takes the form

for some with

. By the second part of Corollary 11 we may thus bound the previous expression by

By the divisor bound and Lemma 5 of this previous post, this sums to

Discarding some factors of , it suffices to show that

From (31), (21), (5) we have and

, so the previous estimate will be implied by

From (20), this will be implied by

or equivalently that

and

which by (8) is obeyed whenever

and

The second condition is implied by the first and may be deleted. The proof of Theorem 5 is now complete.

— 4. Type III estimate —

We now prove Theorem 6. Let be as in the definition of

. We will not need the full strength of double dense divisibility here, and work instead with single dense divisibility. By a finer-than-dyadic decomposition (and using the Bombieri-Vinogradov theorem to handle small moduli), it suffices to show that

for some sufficiently small fixed and all

where .

Henceforth we work with a single choice of , and abbreviate the

summation as

. The left-hand side may then be written as

for some bounded sequence . So it suffices to show that

for some that is independent of

, as the claim then follows by averaging in

.

The left-hand side may be rewritten as

Note that for one has

By Fourier inversion we have

for all , where

is the Fourier transform

From the smoothness of , Poisson summation, and integration by parts we have the decay estimates

for any fixed and any

. More generally, we also have the derivative estimates

for any fixed and any

. We thus have

(say) when , where

Furthermore, for , we can perform a Taylor expansion around

and conclude that

for some fixed (depending on

), any

, and some coefficients

whose exact value will not be of importance to us. We may thus express (37), up to negligible errors, as the sum of a bounded number of expressions of the form

for some bounded sequences whose exact value will not be of importance to us other than their support, which is contained in the sets

and

respectively, and where

If we then introduce the modified hyper-Kloosterman sum

defined for and

, then our objective is now to show that

for some that does not depend on

, where

is the reciprocal of

in

and

.
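The Fourier decay estimates used earlier in this section reflect a general principle. Sampling a smooth, compactly supported bump produces Fourier coefficients that decay faster than any power of the frequency, whereas a sharp cutoff only gives decay of order $1/|h|$. The Python sketch below illustrates this with the hypothetical choice $\psi(t) = \exp(-1/(t(1-t)))$ on $(0,1)$. It compares $\left|\sum_n \psi(n/N) e_q(-hn)\right|$ with the analogous sum for the indicator of $[0,N]$, both normalised by their value at $h = 0$.

```python
import cmath
import math


def bump(t):
    """A smooth function supported on [0, 1] (hypothetical choice of cutoff)."""
    return math.exp(-1.0 / (t * (1.0 - t))) if 0.0 < t < 1.0 else 0.0


def fourier(weights, h, q):
    """sum_n weights[n] * e_q(-h n)."""
    return sum(w * cmath.exp(-2j * cmath.pi * h * n / q) for n, w in enumerate(weights))


N, q = 200, 1000
smooth = [bump(n / N) for n in range(N + 1)]
sharp = [1.0] * (N + 1)
for h in (0, 10, 50, 100, 500):
    rel_smooth = abs(fourier(smooth, h, q)) / fourier(smooth, 0, q).real
    rel_sharp = abs(fourier(sharp, h, q)) / fourier(sharp, 0, q).real
    print(h, f"{rel_smooth:.2e}", f"{rel_sharp:.2e}")
```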

We may rewrite as

. Observe that

is independent of

if one of

vanishes (as can be seen by dilating

), and similarly if

or

vanishes. Thus we may delete the case

from the above sum, and reduce to showing that

At this point we need to address the technical problem that the  may still share a common factor with

even after being restricted to be non-zero. For

, let

be the product of all the primes in

(counting multiplicity) that also divide

; thus

where

is coprime to

. As we shall see, the case

is dominant, and on a first reading one may wish to focus exclusively on this case in what follows to simplify the discussion. We then write

; this divides

, so we may write

. Note that as

is

-densely divisible,

is

-densely divisible, thus

.

Now we factor . From Lemma 8 we see that

For the second term, we observe that is coprime to

for

, and so by dilating the variables

we have

where is the residue class

and we recall that is the normalised hyper-Kloosterman sum

As for the first term, we have the following estimate:

Lemma 14 We have

where

(thus for instance

).

Proof: By further applications of Lemma 8 it suffices to show that

whenever is prime,

with

, and

.

Without loss of generality we may assume that , then we may rewrite

as

But this factors as the product of two Ramanujan sums divided by , and the claim then follows by direct computation.

For brevity we write for

. We may thus bound the left-hand side of (38) by

where the summations are over the ranges

Writing , so that

and recalling that , we may thus estimate the previous expression by

where is the quantity

where is the third divisor function and

We now focus on estimating .

be a quantity to optimise in later, where

and

Observe that every that appears in the expression for

is

-densely divisible and may thus be factored as

for some coprime

with

with . Thus we may write

where

From crude estimates we have

so from the Cauchy-Schwarz inequality we have

where

and is a smooth cutoff function supported on the interval

which equals one on

.

Now we estimate . We can expand this expression as

We first dispose of the diagonal case . Here we use the Deligne bound

to bound this case in magnitude by

By the divisor bound, for each there are

choices for

, so this expression is

which sums to .

Applying Lemma 13 for the off-diagonal case , we thus have

where

Using the bound , this becomes

By Lemma 5 from this previous post we have

and hence also

and thus

Similarly, we have

for all , so on summing over all

we have

We thus have

Writing ,

and

, we thus have

Performing the summation, this becomes

and then performing the summation we obtain

The net power of here is always at most

, so the

term in the summation dominates:

To optimise this in , we select

(The quantity comes from equating

and

.) By construction, we have the second inequality in (40). We also claim the first inequality, since this is equivalent to

which would follow if

But from (41) one has and

, and the claim now follows from (36) and (13).

Inserting this value of (using

for the first two terms in (43)), we conclude that

One should view the first term here as the main term. By (42), we conclude that

Since ,

, and

, we thus have

From Euler products we see that

and so to prove (39) it will suffice to show that

We can rewrite these conditions as upper bounds on :

As and

, we can rewrite these conditions as upper bounds on

:

Since and

, these conditions become

which we may rearrange as

but these follow from (12). The proof of Theorem 6 is complete.

94 comments


8 July, 2013 at 12:13 am

David Roberts

(empty comment to subscribe to email updates)

8 July, 2013 at 12:37 am

g

Probably-clueless question here, motivated purely by aesthetics:

Clearly 2 is a gratuitously specific number :-). What happens if we replace the notion of “doubly dense divisibility” by, let’s call it, “hereditarily dense divisibility”: x is y-HDD iff either x=1 or in every interval [R/y,R] with 1 <= R <= x there is a factor of x that's also y-HDD. It seems like this is stronger than DDD but weaker than y-smoothness (but maybe intuition is deceptive here?).

8 July, 2013 at 9:21 am

Terence Tao

I think this property is equivalent to y-smoothness, since by setting R slightly less than x we see that every y-HDD number is the product of a number in (1,y] and another number which is y-HDD, and hence by induction is y-smooth. So we have a fairly continuous hierarchy of properties, from y-dense divisibility (the weakest), to double y-dense divisibility, to triple y-dense divisibility, …, all the way to hereditary y-dense divisibility or equivalently y-smoothness (the strongest property).

The sieving step can purchase us double y-dense divisibility for almost the same price as y-dense divisibility, but presumably the price keeps rising as we ask for more and more divisibility. For instance, with our current best values of  we can purchase double dense divisibility at  (and single dense divisibility at more or less the same level), whereas smoothness requires  to be 873 or so.

There is a chance that we will need triple or higher dense divisibility if we start iterating the van der Corput process more often than we are doing now, but thus far this seems to have been counterproductive.

8 July, 2013 at 12:55 am

Lior Silberman

Is inequality (12) reversed? Otherwise large values of  wouldn’t violate it.

8 July, 2013 at 9:28 am

Terence Tao

No, I think the inequalities are the right way for the Type III estimates. The parameter  demarcates the border between Type I and Type III; if one increases  , then we dump more cases into Type I (which then becomes harder to prove) but take more cases out of Type III (which becomes easier to prove). So the necessary conditions for Type I involve upper bounds on  while the necessary conditions for Type III involve lower bounds, which have to be balanced against each other (and with the combinatorial condition  ) to get the final range of  . (Actually, with our current technology, the combinatorial constraint is giving a stronger lower bound than the Type III estimates, so it is not currently a critical priority to try to improve the Type III estimates further.)

8 July, 2013 at 4:57 am

Eytan Paldi

Let  denote the number of consecutive prime pairs  satisfying  . Since it is known that  , the next (natural) step is to give an explicit lower bound on the growth of  for some  , where  is the number of consecutive prime pairs  satisfying  .

(Even  should be a great advance!)

8 July, 2013 at 5:14 am

Eytan Paldi

In terms of  as defined above, Zhang’s theorem is equivalent to  for some  .

8 July, 2013 at 6:00 am

Lior Silberman

I haven’t followed all the details, but isn’t the argument producing an H such that, for all x large enough, there is a pair of primes at distance at most H in the interval [x,2x]? That directly gives the bound .


8 July, 2013 at 6:30 am

Eytan Paldi

You are right of course! (it is interesting that even Bertrand’s postulate shows that Zhang’s theorem implies this seemingly stronger result.)

So perhaps the next step is to find a lower bound on the growth of P_2(x, H) as implied by the best known upper bound on the gap between consecutive primes.
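Lior's dyadic argument can be checked numerically on a small scale. The sketch below assumes that P_2(x, H) counts consecutive primes p < p' ≤ x with p' − p ≤ H (the precise definition in the comments above was lost in extraction); the function names `p2` and `dyadic_blocks_with_pair` are illustrative only. Since a pair of consecutive primes lies in at most one dyadic block [2^j, 2^{j+1}), a guarantee of one H-close pair in every such block immediately gives P_2(x, H) ≫ log x.

```python
from math import isqrt


def primes_up_to(x):
    """Return the list of primes <= x using the sieve of Eratosthenes."""
    if x < 2:
        return []
    is_prime = bytearray([1]) * (x + 1)
    is_prime[0] = is_prime[1] = 0
    for p in range(2, isqrt(x) + 1):
        if is_prime[p]:
            # Cross off p*p, p*p + p, ... up to x.
            is_prime[p * p :: p] = bytearray(len(range(p * p, x + 1, p)))
    return [n for n in range(x + 1) if is_prime[n]]


def p2(x, H):
    """Hypothetical P_2(x, H): consecutive primes p < p' <= x with p' - p <= H."""
    ps = primes_up_to(x)
    return sum(1 for p, q in zip(ps, ps[1:]) if q - p <= H)


def dyadic_blocks_with_pair(x, H):
    """Number of j >= 1 with 2**(j+1) <= x whose block [2**j, 2**(j+1))
    contains consecutive primes p < p' with p' - p <= H."""
    ps = primes_up_to(x)
    hits = set()
    for p, q in zip(ps, ps[1:]):
        j = p.bit_length() - 1  # so that 2**j <= p < 2**(j+1)
        if j >= 1 and q - p <= H and q < 2 ** (j + 1) <= x:
            hits.add(j)
    return len(hits)
```

For example, `p2(100, 2)` counts the nine pairs (2, 3), (3, 5), (5, 7), (11, 13), (17, 19), (29, 31), (41, 43), (59, 61), (71, 73), and `dyadic_blocks_with_pair(x, H)` is always at most `p2(x, H)`.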

8 July, 2013 at 9:34 am

Terence Tao

Dear Eytan,

You might be interested in this recent paper of Pintz at http://arxiv.org/abs/1305.6289 which discusses what results of this type one can get as consequences of Zhang’s theorem (or variants thereof). In particular, I think the bound of  can be obtained from the sieve-theoretic arguments. The arguments of Goldston, Pintz, and Yildirim at http://arxiv.org/abs/1103.5886 should in principle be able to improve this, though I don’t know if we can get the optimal  this way. This question is a little outside of the direct scope of the Polymath8 project, but perhaps someone will look into it.

8 July, 2013 at 10:02 am

Eytan Paldi

Dear Terence, thanks for the information! (It seems that the conjecture that  is sufficient to get  .)

8 July, 2013 at 11:21 am

Gergely Harcos

@Eytan: Why would  imply  ?

8 July, 2013 at 2:08 pm

Eytan Paldi

Assume the conjecture  for some absolute constant  for each prime  . My idea was to define the sequence  by  , starting with sufficiently large  so that each interval  contains a pair of consecutive primes, and the number of such intervals below  is  . But (thanks to Gergely's question) I realized that the problem is that for each fixed H, the number of H-bounded prime gaps can grow arbitrarily more slowly than the number of such intervals below  .

So it is not clear now how to get any explicit lower bound on the growth of  .

8 July, 2013 at 9:47 am

Eytan Paldi

In my previous comment (8 July, 4:57 am), the definition of  should be the number of consecutive prime pairs  satisfying  .

8 July, 2013 at 5:30 am

M Flax

In the first formula of definition (2), should log(x) be log(n)?

8 July, 2013 at 12:56 pm

Pace Nielsen

No. By convention,  is implicitly a function of  .

8 July, 2013 at 8:57 am

Pace Nielsen

Since the current boundary is the combinatorial bound  , it makes sense to see what happens if we replace the approximation  in the Type III computations with the weaker  , which avoids the combinatorial obstacles. If we do, the three lower bounds on  that we obtain are

and

and

It appears that there is room to rebalance these inequalities, but only the second one currently surpasses the  obstacle.

It would also be interesting to rework the Type III computations but, instead of working with three smooth functions, use only one or two (with, respectively,  ). We would then need to bound formulas involving  instead of  .

8 July, 2013 at 2:39 pm

Eytan Paldi

The pages “Dickson-Hardy-Littlewood theorems” and “Distribution of primes in smooth moduli” should be updated according to this post.

[Done, thanks for the suggestion – T.]

9 July, 2013 at 7:13 am

Eytan Paldi

More corrections:

1. In the page “Dickson-Hardy-Littlewood theorems”, in the last title MPZ should be replaced by MPZ”. Also (to ensure that  ),  in the last section should be defined (as already suggested by Gergely Harcos) as the minimum between its current expression and 1.

2. In the page “Distribution of primes in smooth moduli”, in the second line MPZ” should also be mentioned. The definition of MPZ” should also be added, as well as the definitions of  and  .

[Corrected, thanks – T.]

9 July, 2013 at 9:50 am

Gergely Harcos

Let me clarify. Regarding Theorem 5 and its later variant in the earlier thread (https://terrytao.wordpress.com/2013/06/18/a-truncated-elementary-selberg-sieve-of-pintz/), I suggested that there was no need to redefine  , because  . The wiki page (http://michaelnielsen.org/polymath1/index.php?title=Dickson-Hardy-Littlewood_theorems) is a slightly different issue, because the Maple code there contains an upper bound for  rather than its actual value. On the other hand, this upper bound is increasing in  (it is the antiderivative of a nonnegative function), hence if  is admissible for some  , then it is admissible for the redefined  as well. This means that the Maple code worked fine in its original form (i.e. without taking the minimum of  and  ), but of course it is more efficient in its current updated form.

9 July, 2013 at 11:08 am

Eytan Paldi

Thank you! (now I understand it better.)

8 July, 2013 at 9:00 pm

Pace Nielsen

I believe that  in the Type I estimate bounds should be  .

[Sorry about this, actually the  is I think correct, but in the display deriving it at the bottom of Section 2, I had an  where there should have been a  instead, which I’ve now corrected. -T.]

8 July, 2013 at 11:32 pm

James Hilferty

Is all this not a little fruitless and over complicated? I have a far simpler sum, namely the Black-Scholes formula. No one has actually disproved it and for a theorem which never worked at anytime just look at all the damage it caused to the financial system. I was just recently looking at JP Morgan’s “Var” formula and they admit that there is no accurate method at predicting the price “volatility” of a future’s option and you all are trying to do something similar with your new (?) probability theorem. What do you all think?

9 July, 2013 at 7:35 am

Anonymous

I admire your imagination but deplore your ignorance.

9 July, 2013 at 9:26 am

Terence Tao

As usual, I am recording the critical numerology, i.e. the endpoint case that we currently can’t treat with our methods. Setting  for simplicity, this is  and  . In the Heath-Brown decomposition, the enemy here is either a “Type IV” expression of the form  where  are smooth and supported at scale  and  is smooth and supported at scale  , or a “Type V” sum of the form  where all of the  are smooth and supported at scale  . While it looks like the Type IV sum is treatable from the Type I method by exploiting the additional smoothness of  in this case, it does not seem that this is available for the Type V sum, which is also far from being treatable by Type III methods.

If we attack the Type V sum by Type I methods, we factor it as  where  is supported at scale  , and  is supported at scale  . The modulus  has magnitude  and is factored as  , where  (ignoring epsilons) and so  . After various Cauchy-Schwarz type manipulations, our task is then to show that

where  , and we may take  to be coprime as the dominant case (so  ). Thus we need to gain about  over the trivial bound. There is also some additional averaging in the k and r parameters (the k averaging is over a negligible range, but r ranges over scales  and is potentially useful), but we currently do not know how to exploit this extra averaging. The phase  is periodic with period  .

The way we are currently treating the Type I sum, we are factoring  with  and  . After Cauchy-Schwarz, we are reduced to showing that

thus we need to save a factor of  over the trivial bound. Hence, on average, we need to obtain an exponential sum estimate of the form

where  is an explicit but slightly messy phase of period  . With the particular choice of  , we have  and  , so our objective is to prove something like

for an “average” phase  of period  (as a zeroth approximation, think of the model case  where  ranges over a shortish interval, say of length  ). This is currently being treated by the van der Corput estimate, which roughly has the shape

which doesn’t save the epsilon, and is ultimately why we just barely fail to make  reach  . So the basic challenge is to do better than (*) when  , possibly after exploiting the additional averaging in the  parameters that are available. But these parameters are hard to exploit: the k averaging is negligible, the  averages are substantial but affect the modulus (which is bad), leaving only the  averaging, which looks promising but is only over a fairly short range. A model problem would be to obtain a bound of the form

for a “typical” sufficiently smooth modulus  , whatever “typical” means. (Here we are implicitly using smooth cutoffs where necessary, and adopting the convention that  vanishes when  is not coprime to  .)
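For orientation, here is the standard way a single q-van der Corput bound of this general shape arises for a smooth modulus. This is a hedged sketch, not the exact form of (*), whose display did not survive: it assumes q = q_1 q_2 with coprime factors of freely chosen sizes (available because q is smooth), q_1 ≤ N ≤ q_2, that the differenced phase f(n + r q_1) − f(n) has period q_2 in n and is non-degenerate for r ≠ 0, and square-root cancellation (Weil/Deligne) for the resulting complete sums modulo q_2.

```latex
% Assumptions: q = q_1 q_2, (q_1, q_2) = 1, q_1 \le N \le q_2, and
% square-root cancellation for the complete sums modulo q_2.
S := \sum_{n \in I} e_q(f(n)), \qquad |I| = N.

% Weyl differencing with shifts r q_1, 1 \le r < R := N / q_1; the q_1-part
% of the phase cancels, leaving a phase of period q_2 (then complete the sum):
|S|^2 \ll \frac{N^2}{R} + \frac{N}{R} \sum_{1 \le r < R}
   \Bigl| \sum_{n} e_q\bigl(f(n + r q_1) - f(n)\bigr) \Bigr|
   \ll N q_1 + N q_2^{1/2 + o(1)}.

% Balance q_1 = q_2^{1/2}, i.e. q_1 = q^{1/3}, q_2 = q^{2/3}:
|S| \ll N^{1/2} q^{1/6 + o(1)}.

% At the endpoint N = q^{1/3} this is N q^{o(1)}: no power saving at all.
```

The last line shows how a bound of this type becomes exactly trivial at the endpoint, which is the sense in which it fails to save the epsilon.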

9 July, 2013 at 10:40 am

Terence Tao

By chance I happened to attend a nice talk by Igor Shparlinski while at Budapest in which he gave a nice summary of the state of the art on how to estimate multidimensional incomplete exponential sums (though he focused primarily on the prime modulus case rather than the smooth modulus case). In addition to the standard technique of completion of sums, he also mentioned Vinogradov-type techniques (which are traditionally used to estimate exponential sums over the integers, e.g. for Waring’s problem or in bounding the zeta function, but can also give non-trivial results in finite fields), as well as the sum-product methods of Bourgain and co-authors (but this requires a lot of multiplicative structure), and also the Burgess-type arguments (again this requires some sort of multiplicativity in the phase though).

Another route is to develop the q-van der Corput theory more fully, basically replicating the theory of exponent pairs in the q-setting. There are some exponent pairs that are known that are not obtainable from the standard A- and B-processes (e.g. the work of Huxley and Watt). These may end up being a bit complicated to execute though (if we do too many fancy manipulations, the dependence on  is eventually going to hurt us; also, the Deligne-type exponential sum we will need to control at the end gets more and more complicated, though perhaps there is only a finite amount of algebraic geometry verification to be done for each specific application of this machinery).

One route that looks moderately promising to me is to try to combine the van der Corput A-process that we are currently using with the additional averaging in auxiliary parameters such as  that we are currently unable to exploit (I called this a “Level 5” Type I estimate on the wiki). This should attenuate the diagonal contribution, which should allow for some rebalancing of parameters that takes some of the load off of the off-diagonal contribution. (This trick was already used to good effect on the Type III sums.) I’ve tried to do this a few times in the last few days, but the problem has always been that the parameters being averaged over vary over a shorter range than the difference  that one is Weyl differencing over, so no additional gain could be extracted; however, I did not exhaust all the possible permutations of this strategy.

ADDED LATER: I forgot to add automorphic forms techniques (or “Kloostermania”) as a possible further technique which can exploit averaging in the modulus, although once one moves away from the model problem and starts considering more realistic sums such as  where  varies with  in a manner consistent with the Chinese remainder theorem, my understanding is that these methods become significantly more difficult to deploy.

9 July, 2013 at 10:52 am

Pace Nielsen

Dear Terry,

Thank you for this analysis. I’ll try to absorb it more fully over the next week. In the meantime I did have a few questions (some perhaps easier to answer than others).

1. I’m a little weak on when it is allowable to use the Linnik decomposition rather than the Heath-Brown decomposition. Assuming that we can use the Linnik decomp., is there any extra information we gain that helps in these analyses? For instance, do we gain any insight into the scales involved in the coefficient sequences?

2. In your computations above, you take  . Another option would be to take  and try to take advantage of the smoothness of  . Does the smoothness (and thus avoidance of an extra Cauchy-Schwarz) make up for the smaller scale size? Or is this just a pipe dream?

3. Perhaps a better way to take advantage of the smoothness would instead be to modify the Type III computations from  to  or  (with  of scale  ). This would replace the need for bounds on  with the need for bounds on  , which seem to be much more controllable. (Again, this might be a pipe dream.)

Best wishes,

Pace
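Question 1 refers to the Linnik decomposition. One standard form of Linnik's identity writes Λ(n)/log n as the alternating sum ∑_{k≥1} (−1)^{k−1} t_k(n)/k, where t_k(n) counts ordered factorizations of n into k factors, each at least 2. The check below is a minimal sketch (function names are illustrative) that verifies the identity exactly for small n. It also makes visible why the decomposition has unboundedly many terms: t_k(n) is nonzero for every k up to the number of prime factors of n counted with multiplicity.

```python
from fractions import Fraction
from functools import lru_cache


@lru_cache(maxsize=None)
def t(k, n):
    """Number of ordered factorizations n = m_1 * ... * m_k with every m_i >= 2."""
    if k == 0:
        return 1 if n == 1 else 0
    return sum(t(k - 1, n // d) for d in range(2, n + 1) if n % d == 0)


def linnik_rhs(n):
    """Right-hand side of Linnik's identity, sum_{k>=1} (-1)^(k-1) t_k(n) / k.
    Only k <= log2(n) can contribute, since every factor is at least 2."""
    return sum(Fraction((-1) ** (k - 1) * t(k, n), k)
               for k in range(1, n.bit_length() + 1))


def lambda_over_log(n):
    """Lambda(n)/log(n): equals 1/m if n = p**m for a prime p, and 0 otherwise."""
    if n < 2:
        return Fraction(0)
    p = next(d for d in range(2, n + 1) if n % d == 0)  # smallest prime factor
    m = 0
    while n % p == 0:
        n //= p
        m += 1
    return Fraction(1, m) if n == 1 else Fraction(0)
```

For example, 8 has one factorization with one factor, two with two factors ((2, 4) and (4, 2)) and one with three, so `linnik_rhs(8)` is 1 − 2/2 + 1/3 = 1/3, matching 8 = 2^3.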

9 July, 2013 at 7:54 pm

Terence Tao

Dear Pace,

1. Morally speaking, the Linnik decomposition should work in these arguments, but it has infinitely many terms and there is basically no hope of controlling sums involving the divisor function  with a really good dependence on k. Note for instance that  is (conditionally on the Möbius pseudorandomness conjecture) believed to be  for any fixed  (with the decay rate being highly non-uniform in  ), while  is certainly not  , so one can’t hope to use Linnik to get good estimates for  from good estimates for  .

2. Let’s do a quick computation. If one halts the Type I argument before the final Cauchy-Schwarz, the objective in the critical case is to obtain an estimate roughly of the form

for some phase  of period  , where  . If  is smooth, one can use the completion of sums bound

which suggests the constraint

which rearranges to

If we instead use the van der Corput bound

then we are led to the constraint

which becomes

This is a bit better than the current Type I constraint of  ; with  , it allows us to take  as large as  when  is smooth, so we can basically handle any configuration  of exponents with  . That’s not bad (it should dispose of most of the “Type IV” type sums we will encounter), but it is still quite far from dealing with the tuple  , which would basically require stretching  to be as large as  .

If we apply the next iteration of van der Corput, this gives

leading to the constraint

which rearranges as

which, at  , gets  as large as  ; this is actually worse than what we got with just one van der Corput (consistent with the other times that we have tried iterated van der Corput: the gain in the  aspect is generally outweighed by the loss in the  aspect). So we’re not making much progress here towards stretching the Type I sums all the way to the  case when  is smooth.

One last numerology check: if we assume the most optimistic exponential sum bound (the Hooley conjecture)

one gets

which clears the bar with room to spare (  can now be as large as  ). So there is hope, but it does require quite strong exponential sum estimates.

3. I haven’t given this a shot yet, but I’ll take a look at the suggestion.
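The completion of sums step used in item 2 can be illustrated in its simplest setting: a prime modulus p and the phase n ↦ n̄/p, where n̄ is the inverse of n mod p. Both choices are assumptions made for concreteness; the thread concerns smooth moduli and more complicated phases. Expanding the indicator of [1, N] in additive characters modulo p and bounding each complete Kloosterman sum by 2√p gives |∑_{1≤n≤N} e(n̄/p)| ≤ 1 + 2√p(1 + log p) for every N < p. This bound does not improve as N shrinks, so it only beats the trivial bound N once N is somewhat larger than √p.

```python
import cmath
import math


def e(theta):
    """Additive character e(theta) = exp(2*pi*i*theta)."""
    return cmath.exp(2j * math.pi * theta)


def incomplete_inverse_sums(p):
    """Return [|sum_{1 <= n <= N} e(nbar/p)| for N = 1, ..., p - 1], where nbar
    is the inverse of n modulo the prime p (pow(n, -1, p) needs Python 3.8+)."""
    totals, s = [], 0j
    for n in range(1, p):
        s += e(pow(n, -1, p) / p)
        totals.append(abs(s))
    return totals


def completion_bound(p):
    """1 + 2*sqrt(p)*(1 + log p): completion of sums plus the Weil bound."""
    return 1 + 2 * math.sqrt(p) * (1 + math.log(p))
```

For p = 10007, for instance, one can compare `max(incomplete_inverse_sums(p))` with `completion_bound(p)` (about 2 × 10^3) and with the trivial bound p − 1. The full sum N = p − 1 is a Ramanujan sum equal to −1.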

10 July, 2013 at 10:15 am

Pace Nielsen

Dear Terry,

Thank you for working this out. It appears that I wasn’t conveying myself well, since my question #2 was about a lower bound on , not upper bounds. However, your answer was still extremely helpful for me, and hopefully will help me explain my idea a bit better.


For simplicity I’m going to ignore all the  ’s and  ’s.

As you know, the combinatorial data gives a natural meeting place between the Type I/II and Type III sums, which can be thought of as given, respectively, by upper and lower bounds on $\sigma$. The Type III sums arise from consideration of how many smooth sequences have scales somewhere in the range $[x^{2\sigma}, x^{1/2-\sigma}]$. If there are too few (or too many) such sequences (and  ), then we can reduce to the Type I/II bounds. On the other hand, if there are exactly three sequences in this range, we can then either again reduce to the Type I/II bounds, or we have that the product of the three scales is at least  , which we call the Type III case.

My idea is to forget about some of this information in exchange for weaker combinatorial restrictions. So, for example, when we are in the Type III situation I forget about the fact that there are three smooth sequences in the range $[x^{2\sigma}, x^{1/2-\sigma}]$ for which the product of the scales is fairly large, and instead only retain the information that there is a single smooth sequence in the range. This is clearly going to lead to inequalities worse than the usual Type III bounds, but as the current restriction occurs between the Type I bounds and  , this might not be too bad.

So, now let's work through the numerology. We have  . Similarly,  since we are ignoring  . We also have  , where  . Finally,  . Now let's consider the four types of bounds from your post.

————**Completion of sums**—————

We need  . This leads to  . Hence

This, in conjunction with the given Type I bound, yields  , which is worse than what we normally get.

————–**van der Corput once**—————-

We need  . This leads to  . Hence

This, in conjunction with the given Type I bound, yields  .

—————–**van der Corput twice**—————-

We need  . This leads to  . Hence

This, in conjunction with the given Type I bound, yields  , which is worse than the single van der Corput.

Notice further that in all three cases we never surpass the  bound.

—————-**Hooley Conjecture**———————

We need  . This leads to  . Hence

In this case, it appears that we can actually remove all of the Type I considerations and reduce to the Type II sums!

10 July, 2013 at 11:57 am

Terence Tao

In case you’re interested, the general form of the van der Corput estimate (assuming as much smoothness as needed) is

so  for  (completion of sums),  for  (single van der Corput),  for  (double van der Corput),  for  , and so forth. I believe one would eventually push  down as low as desired by continually iterating van der Corput, but it becomes exponentially expensive in  to do so, so unfortunately it is not a net win. (However, if one just wants to prove Zhang’s theorem and doesn’t care how small  (or  ) has to be, I think we now have the technology to do so entirely using the Type II argument, and we could even replace the completion of sums bound  by the weaker, but more elementary, Kloosterman bound of  that avoids the Weil conjectures completely.)
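The pattern in these exponents can be generated mechanically. The sketch below assumes that each q-van der Corput step acts on exponents like the classical A-process on exponent pairs, (κ, λ) ↦ (κ/(2κ+2), (κ+λ+1)/(2κ+2)). It starts from the completion-of-sums pair (1/2, 1/2) and reads a pair as the bound ∑_{n≤N} e_q(f(n)) ≪ (q/N)^κ N^λ = N^{λ−κ} q^κ. This mirrors the classical theory and is not taken from the thread. It reproduces q^{1/2} and N^{1/2} q^{1/6}, and in general gives q-exponent 1/(2^{k+2} − 2) after k steps. So the q-exponent shrinks like 2^{−k} while the N-exponent creeps towards 1, which is the exponentially expensive trade-off described above.

```python
from fractions import Fraction


def a_process(kappa, lam):
    """Classical van der Corput A-process on an exponent pair (kappa, lambda)."""
    return kappa / (2 * kappa + 2), (kappa + lam + 1) / (2 * kappa + 2)


def vdc_exponents(k):
    """Exponents (a, b) in the bound N**a * q**b after k A-steps, starting from
    completion of sums (1/2, 1/2), reading (kappa, lambda) as
    (q/N)**kappa * N**lambda = N**(lambda - kappa) * q**kappa."""
    kappa, lam = Fraction(1, 2), Fraction(1, 2)
    for _ in range(k):
        kappa, lam = a_process(kappa, lam)
    return lam - kappa, kappa


for k in range(4):
    a, b = vdc_exponents(k)
    print(f"k={k}: N^{a} q^{b}")
# k=0: N^0 q^1/2
# k=1: N^1/2 q^1/6
# k=2: N^5/7 q^1/14
# k=3: N^5/6 q^1/30
```

Exact rational arithmetic via `Fraction` keeps the exponents readable. The closed form N^{1 − (k+2)/(2^{k+2}−2)} q^{1/(2^{k+2}−2)} can be checked by induction on k.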

10 July, 2013 at 3:11 pm

Terence Tao

I played around a little with item 3, i.e. modifying the Type III computations to deal with  or  instead of  . This is like asking for averaged distribution results for the trivial divisor function  or the classical divisor function  rather than the third-order divisor function  . It turns out that while the distribution results for the former functions are indeed better than for the latter, this is more than offset by the fact that a much smaller portion of the total convolution is actually coming from the divisor function in these cases, so it doesn’t look like a win.

To illustrate what is going on, let us revert to “Level 3” Type III estimates that do not exploit the alpha averaging. Fouvry, Kowalski, Michel, and Nelson showed that  has a level of distribution of 4/7 for smooth moduli, which roughly speaking means that they have good control on  on average for  . This allows one to get good distribution results for  for moduli  , where each  is supported at scale  , provided that

Now suppose we work instead with  . Here the classical result of Linnik and Selberg is that the level of distribution is at least 2/3. (Sketch of proof: one basically needs to estimate  to accuracy  when  . Assuming  , we can perform Fourier summation and reduce to estimating  to accuracy  , where  is the normalised Kloosterman sum

If one then uses the Weil bound  (ignoring the zero frequency contribution  ), we obtain the desired claim.) For smooth moduli, Fouvry and Iwaniec (and Katz) improved the 2/3 slightly to 2/3+1/48, and perhaps with Zhang's new arguments one can push this a bit further, but let's work with the 2/3 number for now. This gives good estimates for  when

This constraint is better in that the 4/7 is improved to a 2/3, but worse in that we are only summing two of the  instead of one. In the model case  we end up significantly worse off if we try to use this estimate.
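The sketch above bounds the normalised Kloosterman sums pointwise. For a prime modulus the relevant input is Weil's bound: for p prime and p ∤ ab, the sum S(a, b; p) = ∑_{x ∈ (Z/pZ)^×} e((ax + b x̄)/p) satisfies |S(a, b; p)| ≤ 2√p, so S/√p has absolute value at most 2. The brute-force check below is a minimal illustration for a few small primes only. The thread applies the corresponding bound to composite (smooth) moduli, and the normalisation by √p is an assumption about the display that was lost.

```python
import cmath
import math


def kloosterman(a, b, p):
    """S(a, b; p) = sum over x in (Z/pZ)^* of e((a*x + b*xbar)/p), p prime."""
    total = 0j
    for x in range(1, p):
        xbar = pow(x, -1, p)  # modular inverse (Python 3.8+)
        total += cmath.exp(2j * math.pi * ((a * x + b * xbar) % p) / p)
    return total


def max_normalised(p):
    """Largest |S(a, b; p)| / sqrt(p) over 1 <= a, b < p."""
    return max(abs(kloosterman(a, b, p)) / math.sqrt(p)
               for a in range(1, p) for b in range(1, p))
```

The sums are real, since the substitution x ↦ −x conjugates each term. So the imaginary part of `kloosterman(a, b, p)` should vanish up to rounding error.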

Next, if we work with  , this trivially has level of distribution 1, so we obtain a good estimate for  when

This is the Type 0 estimate that we are already using. Again, the 4/7 or 2/3 factor has been improved to 1, but at the cost of now only using one of the  , so this is not a win in the cases where we need it most.

Finally, for  we do not have level of distribution results better than 1/2 for smooth moduli (though in principle the Type I/II/III estimates we have should give us something which is at least as good as the estimates we have for MPZ, and perhaps a little bit better because the pesky Type V sums don’t appear for  ; actually, it might be worth having a look to see what numerology we can get for  , as this may offer a benchmark as to what to hope to get for  ), so we only get

which is in fact never satisfied for any positive  .

The numerology changes a bit if we use the latest Type III estimates but I think the general picture is more or less the same.

10 July, 2013 at 4:41 pm

Pace Nielsen

Thanks once again for answering my questions. What seems surprising to me is how badly the constants work out in the  case. Perhaps more surprising to me is that even though the Type III summation techniques have been optimized to improve the lower bound on  , we seem to do better using the techniques from the Type I case when we are restricted to two sequences. That is, when trying to deal with sums involving  with  smooth of scale  , we get better bounds by trivially modifying the Type I techniques than by using the techniques for Type III. Unfortunately, even if some methods from the Type I analysis could make the Type III analysis turn out better, that isn’t the current point of conflict.

Thank you for your very clear analysis. It appears that to make any headway on the Type V sums, we would need to improve the level of distribution for  quite a bit (or establish Hooley’s conjecture and skip it all).

9 July, 2013 at 11:29 pm

Sniffnoy

Just a question from an interested onlooker: Everything since H=12006 has been marked “?”. What part of H=5414 is still unconfirmed?

10 July, 2013 at 8:26 am

Terence Tao

Basically, these later bounds rely on the Type I, II and III estimates described first in a number of scattered blog comments, and then finally compiled in one place in this blog post. So far, none of the other participants of the project have confirmed these estimates (they are somewhat lengthy, and there are a number of places where an arithmetic error could occur; I myself found a few minor ones when converting the comments to the blog format), but this will presumably happen eventually. (It’s not as if we are racing against time here.)

10 July, 2013 at 5:00 pm

Terence Tao

I think I figured out a way (in principle, at least, subject to the verification of some Deligne-level exponential sum estimates) to improve the van der Corput estimate

for smooth functions  at some scale  and some reasonable phase  (e.g. the Kloosterman phase  ) periodic with a smooth period  , very slightly to

if one is allowed to do some averaging in the q aspect, and if one ignores the role of  . As far as the Type I sums are concerned, this would improve the constraint

(or equivalently,  ) to

(or equivalently  ). Playing this against  , this suggests that we can improve  from  to  ; not much, but better than nothing. (Using xfxie’s table at http://www.cs.cmu.edu/~xfxie/project/admissible/k0table.html, this should correspond to $k_0$ somewhere near 630, which by the prime tuples page at http://math.mit.edu/~primegaps/ suggests a value of $H$ somewhere near 4660. But this is an extremely rough back-of-the-envelope calculation.)
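The step from a tuple size to a gap bound (via xfxie's table and the prime tuples page mentioned above) rests on admissible tuples: $k$ integers form an admissible tuple if, for every prime $p$, they miss at least one residue class mod $p$, and the resulting gap bound is the diameter of the tuple. The Python sketch below is not from the thread; it checks admissibility and builds one simple admissible tuple (the first $k$ primes exceeding $k$). The function names are hypothetical, and this crude construction is not expected to match the optimised tuples on the prime tuples page.

```python
import math


def primes_up_to(n: int) -> list[int]:
    """Sieve of Eratosthenes: all primes <= n."""
    if n < 2:
        return []
    sieve = bytearray([1]) * (n + 1)
    sieve[0] = sieve[1] = 0
    for i in range(2, math.isqrt(n) + 1):
        if sieve[i]:
            # Mark every multiple of i from i*i onward as composite.
            sieve[i * i :: i] = bytearray(len(range(i * i, n + 1, i)))
    return [i for i, flag in enumerate(sieve) if flag]


def is_admissible(h: list[int]) -> bool:
    """True if, for every prime p, the tuple misses at least one residue class mod p.

    Only primes p <= k = len(h) need checking: k integers occupy at most k
    residue classes, so they cannot cover all p classes when p > k.
    """
    k = len(h)
    for p in primes_up_to(k):
        if len({x % p for x in h}) == p:
            return False
    return True


def primes_above_tuple(k: int) -> list[int]:
    """The first k primes exceeding k (hypothetical helper name).

    Admissible because no element is divisible by a prime p <= k, so the
    class 0 mod p is always missed. Simple, but not optimised for width.
    """
    limit = 2 * k + 10
    while True:
        candidates = [p for p in primes_up_to(limit) if p > k]
        if len(candidates) >= k:
            return candidates[:k]
        limit *= 2


if __name__ == "__main__":
    t = primes_above_tuple(630)
    # The diameter t[-1] - t[0] is the gap bound this particular tuple would yield.
    print(is_admissible(t), t[-1] - t[0])
```

Optimised tuples of the same size are found by much more careful searches; the point of the sketch is only the admissibility test itself.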

To explain the strategy, let me make the general remark that much of the game here is trying to estimate averaged exponential sums such as

where the  are various weights at various scales  and  is some explicit algebraic phase depending on  ; the modulus  is also allowed to depend on one or more of the  parameters. The various averages  can be informally divided into several types:

* “smooth” averages (in which  is smooth) and “rough” averages (in which  has no smoothness).

* “long” averages (in which  is large) and “short” averages (in which  is small).

* “non-modulus-altering” averages (in which  does not depend on  ) and “modulus-altering” averages (in which  does depend on  ).
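A rough numerical illustration of the smooth/rough distinction (not from the thread): by Poisson summation, a smoothly weighted long sum of a linear phase $e(n\theta)$ is superpolynomially small once $N\|\theta\|$ is large, whereas the same sum with a sharp cutoff only obeys the geometric-series bound $1/|\sin \pi\theta|$. The bump function, $N = 1000$ and $\theta = 0.3137$ are arbitrary choices made for this sketch.

```python
import cmath
import math


def bump(x: float) -> float:
    """Smooth cutoff exp(-1/(x(1-x))) on (0, 1), extended by zero; all derivatives vanish at 0 and 1."""
    if x <= 0.0 or x >= 1.0:
        return 0.0
    return math.exp(-1.0 / (x * (1.0 - x)))


def e(x: float) -> complex:
    """Additive character e(x) = exp(2 pi i x)."""
    return cmath.exp(2j * math.pi * x)


def smooth_sum(N: int, theta: float) -> complex:
    """Smooth long average: sum over 1 <= n < N of bump(n/N) e(n theta)."""
    return sum(bump(n / N) * e(n * theta) for n in range(1, N))


def sharp_sum(N: int, theta: float) -> complex:
    """Rough (sharp cutoff) average over the same range 1 <= n < N."""
    return sum(e(n * theta) for n in range(1, N))


if __name__ == "__main__":
    N, theta = 1000, 0.3137
    mass = sum(bump(n / N) for n in range(1, N))  # total weight of the smooth average
    print(abs(smooth_sum(N, theta)) / mass)  # tiny, at rounding-error level
    print(abs(sharp_sum(N, theta)) / (N - 1))  # about 1e-3
```

Both sums cover the same range; only the weight changes, and with it the amount of cancellation one can extract.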

Basically, we want to have smooth long non-modulus-altering averages around, because there are a lot of techniques available (e.g. completion of sums) for exploiting such averaging. In contrast, averages that are rough, short, and/or modulus-altering are not easy to exploit directly and so often just have to be discarded through the triangle inequality. However, the Cauchy-Schwarz inequality and its variants (e.g. Weyl differencing, the dispersion method, or Hölder’s inequality) can often be used to convert a “bad” average to a “good” average, albeit at the cost of “square-rooting” any gain one gets (and often the modulus  grows a little bit after one applies Cauchy-Schwarz). So the game is to use Cauchy-Schwarz as sparingly as possible to extract one or more “good” averages that one can then squeeze some cancellation out of (which, in all of our arguments so far, is only obtainable by using completion of sums combined with the Weil conjectures).
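To make “completion of sums combined with the Weil conjectures” concrete, here is a small Python sketch under assumptions not taken from the post: a prime modulus $p = 503$, a length $N = 200$, and the phase $f(n) = e(\bar n/p)$ standing in for a Kloosterman-type phase. Expanding the incomplete sum $\sum_{n \le N} f(n)$ in additive characters mod $p$ turns each Fourier coefficient into a complete Kloosterman sum divided by $p$; the Weil bound $|S(a,b;p)| \le 2\sqrt{p}$ (for $p \nmid ab$) then gives $O(\sqrt{p}\log p)$ whatever the value of $N$.

```python
import cmath
import math


def e(x: float) -> complex:
    """Additive character e(x) = exp(2 pi i x)."""
    return cmath.exp(2j * math.pi * x)


def kloosterman(a: int, b: int, p: int) -> complex:
    """Complete Kloosterman sum S(a, b; p) = sum_{x=1}^{p-1} e((a x + b x^{-1}) / p)."""
    return sum(e(((a * x + b * pow(x, -1, p)) % p) / p) for x in range(1, p))


def kloosterman_phase(n: int, p: int) -> complex:
    """f(n) = e(n^{-1} / p) for p not dividing n, and f(n) = 0 otherwise (period p)."""
    if n % p == 0:
        return 0j
    return e(pow(n, -1, p) / p)


def fourier_coefficients(p: int) -> list[complex]:
    """hat f(h) = (1/p) sum_{x mod p} f(x) e(-h x / p), which equals S(-h, 1; p) / p."""
    values = [kloosterman_phase(x, p) for x in range(p)]
    return [sum(values[x] * e(-((h * x) % p) / p) for x in range(p)) / p for h in range(p)]


def completion_bounds(N: int, p: int) -> tuple[float, float]:
    """Bound |sum_{n=1}^N f(n)| by expanding f into additive characters mod p.

    Uses |sum_{n=1}^N e(h n / p)| <= 1 / |sin(pi h / p)| for h != 0 (geometric series).
    Returns (bound with the true coefficients, bound using only the Weil estimate
    |hat f(h)| <= 2 / sqrt(p) for h != 0).
    """
    coeffs = fourier_coefficients(p)
    exact = abs(coeffs[0]) * N  # h = 0 term; hat f(0) = -1/p
    weil = N / p
    for h in range(1, p):
        geometric = 1.0 / abs(math.sin(math.pi * h / p))
        exact += abs(coeffs[h]) * geometric
        weil += (2.0 / math.sqrt(p)) * geometric
    return exact, weil


if __name__ == "__main__":
    p, N = 503, 200  # arbitrary prime modulus and sum length
    incomplete = abs(sum(kloosterman_phase(n, p) for n in range(1, N + 1)))
    exact, weil = completion_bounds(N, p)
    print(incomplete, exact, weil)  # incomplete <= exact <= weil, the last being O(sqrt(p) log p)
```

The ordering of the three printed numbers is forced by the triangle inequality and the Weil bound; the sketch only makes the mechanism visible.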

The point is that when one applies the van der Corput method once, there is a moderately long smooth average in a parameter  which manages not to alter the modulus. After using Fourier inversion to deal with another long smooth average, we can then deal with the moderately long smooth average by a second van der Corput. (In principle one could keep iterating this procedure, but it is already rather complicated as it is, so I won’t try to do so here.)
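The Weyl differencing step behind the van der Corput method is the standard inequality $H^2 |\sum_n z_n|^2 \le (N+H-1) \sum_{|h|<H} (H-|h|) \sum_n z_{n+h} \overline{z_n}$, valid for any integer $H \ge 1$ and any $z_1, \dots, z_N$ (extended by zero). The Python sketch below is illustrative only and is not the argument of this comment: it checks the inequality numerically on random data, and for a quadratic phase $e(\alpha n^2)$ it shows that each differenced product is a linear phase in $n$, a toy version of the phase cancellation that van der Corput exploits. The choices $\alpha = \sqrt 2$, $N$ and $H$ are arbitrary.

```python
import cmath
import math
import random


def vdc_sides(z: list[complex], H: int) -> tuple[float, float]:
    """Both sides of the van der Corput inequality

        H^2 |sum_n z_n|^2 <= (N + H - 1) sum_{|h| < H} (H - |h|) sum_n z_{n+h} conj(z_n),

    with z_n = 0 outside 0 <= n < N. It follows from writing H * sum_n z_n as a sum
    of N + H - 1 window sums of length H and applying Cauchy-Schwarz.
    """
    N = len(z)
    lhs = H * H * abs(sum(z)) ** 2
    rhs = 0j
    for h in range(-H + 1, H):
        correlation = sum(z[n + h] * z[n].conjugate() for n in range(N) if 0 <= n + h < N)
        rhs += (H - abs(h)) * correlation
    # The h and -h terms are complex conjugates, so rhs is real up to rounding.
    return lhs, (N + H - 1) * rhs.real


def quadratic_phase(alpha: float, N: int) -> list[complex]:
    """z_n = e(alpha n^2); the differenced product z_{n+h} conj(z_n) = e(alpha (2 h n + h^2)) is linear in n."""
    return [cmath.exp(2j * math.pi * alpha * n * n) for n in range(N)]


if __name__ == "__main__":
    rng = random.Random(0)
    z = [complex(rng.gauss(0, 1), rng.gauss(0, 1)) for _ in range(40)]
    for H in (1, 3, 10, 40):
        lhs, rhs = vdc_sides(z, H)
        print(H, lhs <= rhs * (1 + 1e-12) + 1e-9)
    w = quadratic_phase(math.sqrt(2), 200)
    print(vdc_sides(w, 20))
```

With $H = 1$ the inequality is just Cauchy-Schwarz; larger $H$ trades a factor of roughly $N/H$ for the chance to exploit cancellation in the shifted correlations.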

OK, to the details. We will study the averaged sum

We factor  where  ,  , and  is to be optimised later, though we assume we are in the regime  , which is the regime of interest when applying van der Corput. We throw away the  averaging and just average in  ; thus we need to bound

for a given  .

We perform Weyl differencing in the  direction and end up staring at

Pulling out the n summation from the absolute values and then applying Cauchy-Schwarz, we can bound this by

The diagonal term  contributes  . For the off-diagonal terms, we crucially note (as in previous applications of van der Corput) that the  components of the phases cancel, and the phase  collapses to something like  , so we are now looking at

for the off-diagonal contribution, where  is some smooth cutoff function whose exact form is not too important here. If we estimate this by completion of sums, we get an additional term of  , giving the van der Corput bound of  , which optimises to  as before. To do better than this, we first deal with the n summation by Fourier inversion, rewriting the above as something like

where  is the Kloosterman-type sum

The point is that the  summation is a moderately long smooth average (of length vaguely comparable to  or so) that does not affect the modulus of  , and so (assuming certain Deligne-level estimates are OK) we expect a van der Corput bound of the form

which, after some arithmetic, gives a bound of  for the off-diagonal terms. We have thus bounded the original exponential sum by

which optimises to  , as claimed, by setting  and  .

10 July, 2013 at 5:37 pm

Anonymous

Terry, Let’s do a trade, I go to ucla to learn math from you, you learn Cantonese from me, OK?

10 July, 2013 at 7:38 pm