Ben Green and I have just uploaded our paper “The quantitative behaviour of polynomial orbits on nilmanifolds” to the arXiv (and shortly to be submitted to a journal, once a companion paper is finished). This paper grew out of our efforts to prove the Möbius and Nilsequences conjecture MN(s) from our earlier paper, which has applications to counting various linear patterns in primes (Dickson’s conjecture). These efforts were successful – as the companion paper will reveal – but it turned out that in order to establish this number-theoretic conjecture, we had to first establish a purely dynamical quantitative result about polynomial sequences in nilmanifolds, very much in the spirit of the celebrated theorems of Marina Ratner on unipotent flows; I plan to discuss her theorems in more detail in a followup post to this one.

In this post I will not discuss the number-theoretic applications or the connections with Ratner’s theorem, and instead describe our result from a slightly different viewpoint, starting from some very simple examples and gradually moving to the general situation considered in our paper.

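To begin with, consider an infinite linear sequence $(n\alpha + \beta)_{n \in {\Bbb N}}$ in the unit circle ${\Bbb R}/{\Bbb Z}$, where $\alpha, \beta \in {\Bbb R}/{\Bbb Z}$. (One can think of this sequence as the orbit of $\beta$ under the action of the shift operator $T: x \mapsto x + \alpha$ on the unit circle.) This sequence can do one of two things:

- If $\alpha$ is rational, then the sequence $(n\alpha + \beta)_{n \in {\Bbb N}}$ is periodic and thus only takes on finitely many values.

- If $\alpha$ is irrational, then the sequence $(n\alpha + \beta)_{n \in {\Bbb N}}$ is dense in ${\Bbb R}/{\Bbb Z}$. In fact, it is not just dense, it is equidistributed, or equivalently

  $$\lim_{N \to \infty} \frac{1}{N} \sum_{n=1}^N F(n\alpha + \beta) = \int_{{\Bbb R}/{\Bbb Z}} F$$

  for all continuous functions $F: {\Bbb R}/{\Bbb Z} \to {\Bbb C}$. This statement is known as the equidistribution theorem.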
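The dichotomy is easy to see numerically. Here is a minimal sketch, assuming one tests equidistribution through the exponentials $x \mapsto e^{2\pi i k x}$ (the Weyl criterion); the function name `weyl_average` and the sample values (alpha = √2, beta = 0.3, N = 10000) are illustrative choices only.

```python
import cmath
import math


def weyl_average(alpha: float, beta: float, k: int, N: int) -> complex:
    """Average of exp(2*pi*i*k*(n*alpha + beta)) over n = 1, ..., N."""
    total = 0j
    for n in range(1, N + 1):
        # Reduce mod 1 before multiplying by 2*pi to limit rounding error.
        x = (n * alpha + beta) % 1.0
        total += cmath.exp(2j * math.pi * k * x)
    return total / N


if __name__ == "__main__":
    N = 10_000
    # Irrational slope: the averages should be close to zero for each k != 0.
    for k in (1, 2, 3):
        print(k, abs(weyl_average(math.sqrt(2), 0.3, k, N)))
    # Rational slope 1/4 with frequency k = 4: the average stays at 1.
    print(abs(weyl_average(0.25, 0.0, 4, N)))
```

For the irrational slope the averages decay like 1/N (the sum is a geometric series), whereas for the rational slope 1/4 the frequency k = 4 never averages out, reflecting the periodicity.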

We thus see that infinite linear sequences exhibit a sharp dichotomy in behaviour between periodicity and equidistribution; intermediate scenarios, such as concentration on a fractal set (such as a Cantor set), do not occur with linear sequences. This dichotomy between structure and randomness is in stark contrast to exponential sequences such as $(2^n \alpha)_{n \in {\Bbb N}}$, which can exhibit an extremely wide spectrum of behaviours. For instance, the question of whether $(10^n \pi)_{n \in {\Bbb N}}$ is equidistributed mod 1 is an old unsolved problem, equivalent to asking whether $\pi$ is normal base 10.

Intermediate between linear sequences and exponential sequences are polynomial sequences $(P(n))_{n \in {\Bbb N}}$, where P is a polynomial with coefficients in ${\Bbb R}/{\Bbb Z}$. A famous theorem of Weyl asserts that infinite polynomial sequences enjoy the same dichotomy as their linear counterparts, namely that they are either periodic (which occurs when all non-constant coefficients are rational) or equidistributed (which occurs when at least one non-constant coefficient is irrational). Thus for instance the fractional parts $\{\sqrt{2} n^2\}$ of $\sqrt{2} n^2$ are equidistributed modulo 1. This theorem is proven by Fourier analysis combined with non-trivial bounds on Weyl sums.
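As a rough numerical illustration of Weyl's dichotomy (not a proof, and limited by floating-point precision), one can bin the fractional parts of a polynomial sequence and compare the bin frequencies with the uniform value. The helper `bin_frequencies` and the two sample polynomials below are assumptions made for this sketch.

```python
import math


def bin_frequencies(coeffs, N, bins=10):
    """Fraction of n in 1..N for which P(n) mod 1 falls in each of `bins` equal bins.

    `coeffs` lists the coefficients of P from the constant term upwards.
    """
    counts = [0] * bins
    for n in range(1, N + 1):
        # Horner evaluation of P(n) in floating point.
        value = 0.0
        for c in reversed(coeffs):
            value = value * n + c
        frac = value % 1.0
        counts[min(int(frac * bins), bins - 1)] += 1
    return [c / N for c in counts]


if __name__ == "__main__":
    # Irrational leading coefficient: every bin should hold roughly 1/10 of the points.
    print(bin_frequencies([0.0, 0.0, math.sqrt(2)], 100_000))
    # Rational coefficients, P(n) = n/2 + n^2/4: periodic, only two bins are ever hit.
    print(bin_frequencies([0.0, 0.5, 0.25], 1_000))
```

The second polynomial only takes the values 0 and 3/4 modulo 1, so all of its mass sits in two bins, while the first spreads evenly.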

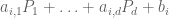

For our applications, we are interested in strengthening these results in two directions. Firstly, we wish to generalise from polynomial sequences in the circle to polynomial sequences $(g(n)\Gamma)_{n \in {\Bbb N}}$ in other homogeneous spaces, in particular nilmanifolds. Secondly, we need quantitative equidistribution results for finite orbits $(g(n)\Gamma)_{1 \leq n \leq N}$ rather than qualitative equidistribution for infinite orbits $(g(n)\Gamma)_{n \in {\Bbb N}}$.

Before we extend to nilmanifolds, let us briefly review what happens for higher-dimensional tori ${\Bbb T}^d = {\Bbb R}^d/{\Bbb Z}^d$. From the theory of Weyl sums, one can show that an infinite polynomial sequence $(P(n))_{n \in {\Bbb N}}$ in a torus is either equidistributed, or is contained in a finite union of proper subtori (cf. Kronecker’s theorem in the case when P is linear). Iterating this, we get a Ratner-type theorem for the torus, namely that every infinite polynomial sequence in a torus is equidistributed within a finite union of subtori.

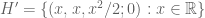

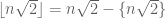

It turns out that a similar Ratner-type result holds for nilmanifolds, and is due to Leibman (with some earlier results in this direction by Leon Green, by Parry, and by Shah). Recall that a nilmanifold is a quotient space $G/\Gamma$, where G is a nilpotent Lie group (which for simplicity we shall take to be connected and simply connected, although these restrictions can be removed with a bit of effort), and $\Gamma$ is a discrete cocompact subgroup. A good example is the Heisenberg nilmanifold

$$G/\Gamma = \begin{pmatrix} 1 & {\Bbb R} & {\Bbb R} \\ 0 & 1 & {\Bbb R} \\ 0 & 0 & 1 \end{pmatrix} / \begin{pmatrix} 1 & {\Bbb Z} & {\Bbb Z} \\ 0 & 1 & {\Bbb Z} \\ 0 & 0 & 1 \end{pmatrix}.$$

There is a well-defined notion of a polynomial sequence $g: {\Bbb Z} \to G$ in G – a sequence which becomes trivial after finitely many applications of the differentiation operator $\partial_h$, defined as $\partial_h g(n) := g(n+h) g(n)^{-1}$. Leibman showed that an infinite polynomial sequence $(g(n)\Gamma)_{n \in {\Bbb N}}$ is either equidistributed in the nilmanifold $G/\Gamma$, or is else contained in a finite union of proper subnilmanifolds; iterating this, one can conclude that every infinite polynomial sequence is equidistributed in a finite union of nilmanifolds. One can also phrase this as a factorisation theorem: every polynomial sequence is the product of a constant sequence, a sequence equidistributed in a nilmanifold, and a periodic (and rational) sequence.
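To make the notion of a polynomial sequence concrete, here is a small sketch in the Heisenberg group. It assumes the convention that the derivative of a sequence g with step h is n ↦ g(n+h) g(n)^{-1}, and it encodes the unipotent matrix with entries x, y above the diagonal and z in the corner as the triple (x, y, z). The sample sequence `g` and its rational coefficient 3/7 are hypothetical, chosen only so that the arithmetic is exact.

```python
from fractions import Fraction

IDENTITY = (0, 0, 0)


def mul(a, b):
    """Product of Heisenberg elements; (x, y, z) stands for [[1, x, z], [0, 1, y], [0, 0, 1]]."""
    return (a[0] + b[0], a[1] + b[1], a[2] + b[2] + a[0] * b[1])


def inv(a):
    """Inverse of (x, y, z) in the Heisenberg group."""
    return (-a[0], -a[1], -a[2] + a[0] * a[1])


def derivative(g, h):
    """Return the sequence n -> g(n + h) * g(n)^{-1}."""
    return lambda n: mul(g(n + h), inv(g(n)))


def g(n):
    # Hypothetical example sequence (n*a, n*a, 0) with a = 3/7.
    a = Fraction(3, 7)
    return (n * a, n * a, Fraction(0))


if __name__ == "__main__":
    d1 = derivative(g, 1)
    d2 = derivative(d1, 2)
    d3 = derivative(d2, 3)
    for n in range(-2, 3):
        print(n, d1(n), d2(n), d3(n))
```

In this example the first derivative still depends on n through the central coordinate, the second is a non-trivial constant central element, and the third is the identity, so the sequence is polynomial in the above sense.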

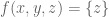

A typical application of Leibman’s theorem would be the assertion that for any real numbers $\alpha, \beta$, the sequence $(\alpha n \lfloor \beta n \rfloor)_{n \in {\Bbb N}}$ is either periodic, or equidistributed modulo 1, where $\lfloor x \rfloor$ is the integer part of x (except in some rare but explicitly describable cases, when $\alpha, \beta$ lie in a quadratic extension of ${\Bbb Q}$, in which one has a different distribution; see comments). I do not know of a way to prove this result which does not basically require one to prove a large part of Leibman’s theorem.
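The exceptional behaviour can be observed numerically in the case where both coefficients equal √2, the example raised in the comments. Writing m = ⌊n√2⌋ and θ = n√2 − m, one has 2n√2·m + θ² = 2n² + m², an integer, so twice the bracket polynomial is congruent to −θ² modulo 1, which is not uniformly distributed. The sketch below checks both facts in floating point; the function name `bracket_stats` and the choice N = 20000 are assumptions of this illustration.

```python
import math


def bracket_stats(N):
    """Study p(n) = n*sqrt(2)*floor(n*sqrt(2)) for n = 1..N.

    Returns (worst, share): `worst` is the largest distance from
    2*p(n) + frac(n*sqrt(2))**2 to the nearest integer, and `share` is the
    fraction of n for which 2*p(n) mod 1 lies in [0, 1/2).
    """
    root2 = math.sqrt(2.0)
    worst = 0.0
    hits = 0
    for n in range(1, N + 1):
        m = math.isqrt(2 * n * n)      # exact value of floor(n*sqrt(2))
        theta = n * root2 - m          # fractional part of n*sqrt(2)
        twice_p = 2 * n * m * root2    # 2*p(n), in floating point
        total = twice_p + theta * theta
        worst = max(worst, abs(total - round(total)))
        if twice_p % 1.0 < 0.5:
            hits += 1
    return worst, hits / N


if __name__ == "__main__":
    worst, share = bracket_stats(20_000)
    print(worst, share)
```

If 2p(n) were equidistributed modulo 1, the share would be close to 1/2; since θ is equidistributed in [0,1), the identity instead predicts 1 − 1/√2 ≈ 0.293.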

Leibman first proves his theorem in the linear case by heavy reliance on the ergodic theorem, an induction on the step of the nilmanifold, and some Fourier analysis (or representation theory) to handle the “vertical” behaviour of the nilmanifold, using some arguments of Parry. He then passes to the polynomial case by using a lifting trick of Furstenberg to linearise the sequence.

Extensions of these results beyond nilmanifolds, and in particular to finite volume homogeneous spaces, are certainly of interest (see for instance this article of Margulis) but are considerably more difficult, as one loses the ability to work by induction from the abelian case.

Now we turn to quantitative versions of the above statements, in which we look at the equidistribution properties of a finite polynomial sequence $(g(n)\Gamma)_{1 \leq n \leq N}$. One can define the notion of a finite sequence being equidistributed up to some error $\delta > 0$, which roughly means that irregularities in the sequence can only be detected at scales $\delta$ or below. Our first main result is that a finite polynomial sequence is either equidistributed up to error $\delta$, or is else concentrated within $\delta$ of $\delta^{-O(1)}$ proper subnilmanifolds, whose “slopes” have height $\delta^{-O(1)}$. One can iterate this and conclude a Ratner-type theorem, namely that every finite polynomial sequence is within $\delta$ of being equidistributed on a polynomial number of proper subnilmanifolds, whose slopes also have polynomially bounded heights. These polynomial bounds turn out to be important for our number theoretic applications, which will be discussed in a subsequent post. There is also a factorisation theorem, which asserts that every polynomial sequence can be expressed as the product of a slowly varying sequence, a sequence which is equidistributed in a nilmanifold, and a periodic rational sequence, with quantitative polynomial bounds on all of these assertions.
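The simplest model for this kind of quantitative dichotomy is a finite linear sequence on the circle, where it follows from the geometric series bound $|\sum_{n=1}^N e^{2\pi i n\theta}| \leq 1/(2\|\theta\|)$, with $\|\theta\|$ the distance to the nearest integer: if the average of $e^{2\pi i k n \alpha}$ over $1 \leq n \leq N$ has size at least δ, then $\|k\alpha\| \leq 1/(2\delta N)$, so α is very close to a rational with denominator k. The sketch below searches for such a frequency k. It illustrates only this abelian toy case, not the argument of the paper, and the names `find_obstruction`, `exp_average` and `dist_to_int` are made up for the example.

```python
import cmath
import math


def dist_to_int(x: float) -> float:
    """Distance from x to the nearest integer."""
    return abs(x - round(x))


def exp_average(theta: float, N: int) -> float:
    """Absolute value of the average of exp(2*pi*i*n*theta) over n = 1..N."""
    total = sum(cmath.exp(2j * math.pi * ((n * theta) % 1.0)) for n in range(1, N + 1))
    return abs(total) / N


def find_obstruction(alpha: float, N: int, delta: float, max_freq: int):
    """Smallest frequency k <= max_freq whose exponential average is at least delta, else None."""
    for k in range(1, max_freq + 1):
        if exp_average(k * alpha, N) >= delta:
            return k
    return None


if __name__ == "__main__":
    N, delta = 1000, 0.5
    near_rational = 0.2 + 1e-5
    k = find_obstruction(near_rational, N, delta, 10)
    print(k, dist_to_int(k * near_rational), 1 / (2 * delta * N))
    print(find_obstruction(math.sqrt(2), N, delta, 10))
```

For the slope 0.2 + 10⁻⁵ the search returns k = 5, reflecting that the first 1000 terms stay close to the five-point subgroup generated by 1/5, while for √2 no small frequency is found at this scale.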

To illustrate our result with a concrete instance, we can assert that for any real numbers $\alpha, \beta$, any error tolerance $\delta > 0$, and any large integer N, one can subdivide $\{1,\ldots,N\}$ into arithmetic progressions, each of density $\delta^{O(1)}$, such that $\alpha n \lfloor \beta n \rfloor \hbox{ mod } 1$ is either within $\delta$ of a constant, or is $\delta$-equidistributed, or is the push-forward of a $\delta$-equidistributed sequence by a quadratic polynomial with coefficients $O(\delta^{-O(1)})$ (this latter case is technical, and rather rare, having to do with special subnilmanifolds of the Heisenberg nilmanifold).

One of the key difficulties in working in the quantitative setting (especially when one is insisting on polynomial bounds everywhere, and when N is fixed in advance) is that one can no longer use the ergodic theorem (unless one has quantitative control on the ergodicity, such as spectral gaps, which appear to be unavailable in this setting). Because of this, we were eventually forced to find a different approach than Leibman’s to these problems, and ended up with one based primarily on Fourier analysis in the vertical direction combined with the van der Corput inequality (very much in the spirit of Weyl’s theory of equidistribution and exponential sums).

Another new feature of the quantitative setting is the presence of error terms: a sequence may not lie exactly in a subnilmanifold, but instead deviate from it by a small error. This error can be eliminated, but often at the cost of increasing the “degree” (or other measures of “complexity”) of the polynomial sequence g(n). This makes it far from obvious that iterative arguments are guaranteed to terminate; it also seems to prevent one from using the Furstenberg lifting trick to linearise a sequence in the middle of an iteration argument, and so we were forced to work directly with polynomial sequences. For similar reasons, we had to perform a fair amount of algebraic computation to set up a good notion of the “complexity” of a polynomial sequence, which decreased with every stage of the main iteration argument. (In the end, this complexity is modeled by three parameters: the step s of the nilmanifold, the “commutator degree” D of the sequence g(n), and the “nonlinearity dimension” of the coefficient spaces for g(n), thus leading to a triple induction on these parameters in order to conclude the argument.)

[Update, Sep 26: inaccuracies with the example fixed; thanks to Ben Green for the corrections.]

53 comments

25 September, 2007 at 9:07 pm

Ben Green

Terry,

Curiously enough I’ve just had my attention drawn to a paper of Bergelson and Leibman where they remark that $n\sqrt{2}[n\sqrt{2}]$ is *not* equidistributed modulo 1 (and it certainly isn’t periodic). If you replace square roots by cube roots, or in fact if you replace $\sqrt{2}$ by more-or-less any other irrational number, you do get equidistribution. The complete theory was worked out in some papers of Inger Haland.

This doesn’t contradict your post in any serious way (or our paper :-)) but it is fun to try and understand this phenomenon in terms of nilmanifolds.

The thing is that $p(n) = n\sqrt{2}[n\sqrt{2}]$ can be viewed as coming from a polynomial sequence on a certain 4-dimensional, two step, nilmanifold G/L, where G is the product of a Heisenberg group and ${\Bbb R}$, and L is the obvious lattice. The polynomial sequence is the map $g: {\Bbb Z} \to G$ given by […], and then our bracket polynomial p(n) is just f(g(n)L), where f(x,y,z;t) = z – t (and I have identified G/L with the fundamental domain $[-1/2,1/2]^4$).

Now that polynomial sequence lies inside a 2-dimensional closed subgroup H of G, namely […], but the y coordinate is always an integer. So when you mod out by L you find that g(n)L lies inside H’/L, where […]. By our theorem it is equidistributed inside that group.

The point is that the map f does not send the natural measure on H’/L to Lebesgue measure on [-1/2,1/2] (Bergelson and Leibman calculate this measure explicitly – this isn’t too difficult, but I won’t do it here).

It *does* send the natural measure on H/L to Lebesgue measure, because that freely varying y essentially takes that weird measure I just mentioned and convolves it with Lebesgue measure, thus producing Lebesgue measure again. This explains why this weird phenomenon is quite rare, and in particular why $n\alpha[n\alpha]$ is equidistributed mod 1 when alpha is the cube root of two.

Ben

26 September, 2007 at 8:53 am

Terence Tao

Dear Ben,

Very nice! That was a subtlety I had not appreciated before. (For $n\sqrt{2}\lfloor n\sqrt{2}\rfloor$, one can explain the phenomenon by observing the identity $2n\sqrt{2}\lfloor n\sqrt{2}\rfloor = -\{n\sqrt{2}\}^2 \hbox{ mod } 1$, which can be verified by writing $\lfloor n\sqrt{2}\rfloor = n\sqrt{2} - \{n\sqrt{2}\}$ and expanding out the fact that $\lfloor n\sqrt{2}\rfloor^2$ is an integer.)

Incidentally, one does not need the additional torus adjoined to the Heisenberg nilmanifold to interpret the example; one can consider the polynomial sequence […] in the Heisenberg nilmanifold and take […] to obtain the same effect.

I see where I went wrong now – the three-dimensional Heisenberg nilmanifold does contain some interesting subnilmanifolds, such as […], which as you say have a different pushforward under f than the 0-dimensional or full-dimensional subnilmanifolds do. I’ll reword the post appropriately.

26 September, 2007 at 9:08 am

Ben Green

Terry,

I was working inside the 4-dimensional thing because one can understand $\alpha n [\alpha n]$ there, whereas that doesn’t seem to come from something on the Heisenberg without the additional torus (though I prepare to be corrected….)

I think your proof that $n\sqrt{2}[n\sqrt{2}]$ isn’t equidistributed gets to the heart of the matter rather more swiftly than mine :-) Takes some of the mystery away.

Ben

26 September, 2007 at 9:40 am

Terence Tao

Dear Ben,

One way to view Ratner’s theorem and its relatives is that the only obstructions to equidistribution of unipotent flows are algebraic ones. So if something like $n\sqrt{2}\lfloor n\sqrt{2}\rfloor$ is failing to be equidistributed modulo 1, there has to be some short algebraic explanation of that fact. It’s sort of a “proof theory” for equidistribution, if you will.

Regarding how to embed things into the Heisenberg nilmanifold, let me write [x,y,z] for the upper triangular matrix $\begin{pmatrix} 1 & x & z \\ 0 & 1 & y \\ 0 & 0 & 1 \end{pmatrix}$. Then G is the space of all triples [x,y,z] with real entries, and $G/\Gamma$ can be identified with triples with entries between -1/2 and 1/2, with an element [x,y,z] of G projecting to an element $[ \{ x \}, \{y\}, \{ z - x\lfloor y \rfloor\} ]$ of $G/\Gamma$. In particular, the polynomial sequence $[n\sqrt{2}, n\sqrt{2}, 0]$ in G projects to the element $[ \{ n\sqrt{2}\}, \{ n \sqrt{2}\}, -\{ n \sqrt{2} \lfloor n \sqrt{2}\rfloor \} ]$ in $G/\Gamma$. This element does not range freely there, but is constrained to the subnilmanifold $G'/\Gamma' := \{ [x,x,z]: -1/2 < x \leq 1/2; 2z = x^2 \hbox{ mod } 1 \}$, which is also the orbit of the one-dimensional subgroup $G' := \{ [x,x,x^2/2]: x \in {\Bbb R} \}$. Of course, it was by locating this subnilmanifold that I found the algebraic relation alluded to above.

One thing this teaches me is that I should do more explicit calculations. Somehow I managed to write my half of our paper while working entirely with abstract nilmanifolds in mind (or at a pinch, abstract 1-step and 2-step nilmanifolds).

26 September, 2007 at 10:27 am

Emmanuel Kowalski

I find both Ratner’s results and this new result of yours somewhat reminiscent (in flavor) of Deligne’s equidistribution theorem over finite fields. In all three cases, some sequence (or finite sets of Frobenius conjugacy classes, in Deligne’s case, because these sets are not naturally ordered) is shown to become equidistributed in “something”. Many problems can then be transferred to the (often easier) issue of knowing what the something is, but there definitely exist situations where the “something” is not as simple as the “generic” answer.

Deligne’s work is even more algebraic since equidistribution holds in a group, not in a manifold or orbit of some kind. Also, his work turns out to be surprisingly suitable for (strong) quantitative statements. (Partly because it is obtained by direct harmonic analysis using finite-dimensional representations of compact groups, and partly because of the always amazing effect of the Riemann Hypothesis).

I should also say that I’m looking forward to reading your next paper on the general form of the Möbius and Nilsequences Conjecture…

26 September, 2007 at 12:20 pm

Gaspard

Dear Terry and Ben,

if I understand well, this proof of the Möbius and Nilsequences conjecture MN(s) means your previous conditional proof of Dickson’s conjecture in the non-binary case is now stronger and relies “only” on the Gowers Inverse GI(s) conjecture.

I was wondering if during this recent work you also gained knowledge on both the GI(s) conjecture and maybe on the binary case too. Thank you. (I’m just an outsider trying to assess how hard the Twin Prime and Goldbach conjectures actually are, if I ever have to mention them in a lecture.)

26 September, 2007 at 12:45 pm

Adam

The Twin Prime conjecture has great educational value, as it makes an impression on any kid who has learned division. It makes one wonder why it is not on the Clay Institute list. I once asked my closest collaborator what he thought about it, but he hasn’t replied yet.

26 September, 2007 at 2:18 pm

Terence Tao

Dear Emmanuel: Thanks for the reference! One day I plan to go through Deligne’s Weil papers, clearly there is a lot of amazing stuff in there…

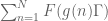

Dear Gaspard: You are correct, the only remaining obstacle to the non-binary Dickson conjecture is now the proof of the Gowers inverse conjecture GI(s). It looks like the Ratner-type theorem in this paper will also be useful for that conjecture (very roughly speaking, just as Fourier analysis is based on the ability to evaluate exponential sums such as […], the “higher order Fourier analysis” which presumably underlies GI(s) should be based on the ability to evaluate sums such as […], and this is now (in principle) achievable thanks to these Ratner-type theorems) but there are some additional issues (coming from additive combinatorics) that Ben and I are still trying to understand properly. We’re definitely looking at it, though…

There is a very slight chance that our methods may one day extend to the binary case, but it would require some substantial new breakthroughs; so far, everything we do requires averaging over at least two independent parameters, whereas in problems such as the twin prime and Goldbach conjectures, there is only one parameter to average over. This appears to be a fundamental limitation to these methods, but of course the addition of a powerful new idea could change the game entirely…

Dear Adam: I believe the Clay problems were selected not only for difficulty, but for the impact that their solution (or the search for a solution) would have on mathematical research (and possibly to applications as well, especially in the case of P=NP, and perhaps also Navier-Stokes). This is more or less orthogonal to how accessible the problem would be to, say, a high-school student (though I think at least 5 of the 7 problems can be explained in general terms to such a student – I did exactly this for […] a few years ago, and something similar for the Riemann Hypothesis last year). The Riemann hypothesis and the BSD conjecture have an enormous number of implications within number theory, and the partial results we already have on these problems have already generated many deep insights and powerful new techniques; a solution to the twin prime conjecture would achieve some of this, but on a substantially lesser scale. Also, the twin prime conjecture appears (to me, at least), to be marginally less impossible to solve than the other two (although with the current rate of progress in arithmetic geometry, it is entirely conceivable that BSD will not look as unsolvable in a few decades as it does right now).

27 September, 2007 at 7:47 am

Emmanuel Kowalski

The difficulty of the twin-prime problem compared to the current best results in additive prime number theory may be somewhat suggested by the fact that the best result of the type

(Known widely believed conjecture) implies (Twin Prime-like Conjecture)

which is currently available is the consequence of the work of Goldston-Pintz-Yildirim according to which the Elliott-Halberstam conjecture implies that for some (small, less than 16 I think currently) fixed even integer $k$, there are infinitely many primes $p$ for which $p+k$ is also prime. The E-H conjecture is (at least in appearance) much stronger than what can be derived from the Generalized Riemann Hypothesis for all Dirichlet L-functions. Moreover, this conjecture is clearly borderline; more optimistic statements that might seem just as reasonable are known to be false by work of Friedlander and Granville, suggested by earlier works of Maier on primes in short intervals.

This is to be contrasted with the results about the ternary Goldbach problem, or 3- or 4-term arithmetic progressions (and hopefully soon $k$-term A.P., $k>2$) by Vinogradov, van der Corput, and of course Green-Tao, which rely “only”, as far as the precise asymptotic distribution of primes is concerned, on the Siegel-Walfisz theorem, which in turn “only” depends on the non-existence of zeros of L-functions at distance roughly $c/\log q$ of the line $Re(s)=1$ (where $q$ is the conductor, and I’m glossing over the exceptional zero, which I shouldn’t…)

(And I should say all this is as far as I know; there’s enormous literature on these problems, so I may be unaware of other conditional approaches to twin prime problems; also I think I didn’t misread the papers of Ben and Terry concerning the amount of “prime distribution” they use, but I’m not the expert on those…).

27 September, 2007 at 8:39 am

Adam

Dear Emmanuel,

Was the implication you mention established by Hardy and Littlewood?

27 September, 2007 at 8:46 am

Emmanuel Kowalski

No, the implication is very recent: it’s part of the work of Goldston, Pintz and Yildirim, see for instance this preprint.

27 September, 2007 at 9:08 am

Adam

Then see:

Cherwell, E. M. Wright, The frequency of prime-patterns, Quart. J. Math. Oxford Ser. (2) 11 (1960), 60-63, and what the authors claim in that paper. Wright co-authored a book with Hardy on prime numbers.

27 September, 2007 at 9:40 am

Terence Tao

Dear Adam: I think you are referring to the Hardy-Littlewood prime tuples conjecture, which does indeed imply the twin prime conjecture (but is much more general). But this is not really a deep reduction, the same way the Goldston-Yildirim-Pintz result is, because the HL conjecture is “on the same level” of complexity as the twin prime conjecture (it is a correlation estimate between primes and shifted primes), whereas the EH conjecture is a correlation estimate between primes and a simpler object, namely generic arithmetic progressions, and is thus simpler (the fewer primes are involved, the easier it is to actually estimate things). As Emmanuel says, the EH is in some sense a “super-GRH” and thus looks wildly optimistic; on the other hand there is an additional averaging which does allow one to get EH-type estimates of comparable strength to GRH (the Bombieri-Vinogradov inequality) and there are also hints that one can do even better in some cases. I plan to write a little more about the Elliott-Halberstam conjecture on this blog at some point…

Emmanuel: You are correct, the only information about the distribution of primes we use in our linear equations in primes paper comes from Siegel-Walfisz. In our first paper we use almost nothing; one even can get by using Chebyshev’s level of understanding of the primes (basically, that zeta has a pole at s=1). [We use Dirichlet’s theorem in our paper, but one could substitute that by just the pigeonhole principle, as we only need one congruence class with a lot of primes in it.]

27 September, 2007 at 9:44 am

Adam

Dear Terry,

I will go to the library and do some reading, to be on the safe side.

To me, TPC has a “hard analysis” flavour, in contrast to your arithmetic progressions in the primes result, which looks like “medium”. If I were interested in it, I would use the new Perelman idea of looking into appropriate physics theories for getting oneself into the right mindset (however, it is quite hard to find a quiet job). If one believes in some self-similarity in nature, maybe some inspiration can be found in particle physics (bamboo bundles are good too – for giant pandas). The great J. M. Hammersley called it “getting to grips with a good problem”:

http://www.statslab.cam.ac.uk/~grg/papers/ham1-ph.html

The search for the solution of the TPC has had quite an impact on applied mathematics. Lord Cherwell considered a probabilistic heuristic derivation, and in the process derived the equation

y'(x) = – ln2 y(x-1) [1+y(x)].

A generalization with a in place of ln2 was studied in the classical:

E. M. Wright, A non-linear difference-differential equation, J. Reine Angew. Math. 194 (1955), 66-87,

and was later rediscovered in mathematical biology, where they call such equations delayed ones. Interestingly, a longstanding open problem in this area was recently completely solved (on a few pages) with the help of Ikehara’s Theorem, a cult result in analytic number theory, which gives the fastest proof of the Prime Number Theorem (the biggest problem of the 19th century), in which one doesn’t need to use the Fourier Transform on the line Re z = 1.

Those interested in such recent results are referred to the literature. They are easy to check for errors, for those who understand calculus, but a little harder to rediscover.

27 September, 2007 at 10:59 am

Emmanuel Kowalski

The prime $k$-tuples conjecture is indeed not distinct enough from the twin prime conjecture that any implication could be considered “evidence” towards the latter. On the other hand, it is by means of an attack on a weakening of the $k$-tuples conjecture that Goldston, Pintz and Yildirim succeeded in proving their results (which, in addition to the conditional one I mentioned include the proof that

$$\liminf (p_{n+1}-p_n)/(\log p_n)=0$$

which uses the Bombieri-Vinogradov theorem, or in other words information about primes in arithmetic progressions looking roughly equivalent with GRH for Dirichlet L-functions.)

One must always be careful when doing arguments of the type (Conjecture A implies Conjecture B); sometimes there is a definite danger of starting to kick big statements back and forth with no gain in knowledge. However, in this case I definitely think some insight has been gained. As Terry mentioned, there is definitely strong evidence towards the Elliott-Halberstam conjecture, and if one simply gave the statement to someone not au courant, there is little chance he/she could immediately see the connection with the Twin Prime or similar conjectures.

(For surveys on this, in addition to the papers, there is an excellent survey by Soundararajan, see http://arxiv.org/abs/math/0605696 ; I have also written a Bourbaki seminar report on this work, but it is in French only).

27 September, 2007 at 1:44 pm

Adam

Getting back to the subject, there is also the interesting looking:

J. Korevaar, Distributional Wiener-Ikehara theorem and twin primes, Indag. Math. (N.S.) 16 (2005), 37-49.

28 September, 2007 at 3:57 am

Doug

I was reading wikipedia in an attempt to better understand Ergodic theory [ET].

Chaos theory, John von Neumann and Alain Connes seem to be linked?

a- “In mathematics, a measure-preserving transformation T on a probability space is said to be ergodic if the only measurable sets invariant under T have measure 0 or 1. An older term for this property was metrically transitive. Ergodic theory, the study of ergodic transformations, grew out of an attempt to prove the ergodic hypothesis of statistical physics. Much of the early work in what is now called chaos theory was pursued almost entirely by mathematicians, and published under the title of “ergodic theory”, as the term “chaos theory” was not introduced until the last quarter of the 20th century.

“Less formally, ergodic theory is generally about what happens in dynamical systems when they are allowed to run for long periods of time. Markov chains are a particularly simple and common context for applications.”

and

Section: Mean Ergodic Theorem (Von Neumann’s Ergodic Theorem)

http://en.wikipedia.org/wiki/Ergodic_theory

b- Section History: “Some of the theory developed by Alain Connes to handle noncommutative geometry [NCG] at a technical level has roots in older attempts, in particular in ergodic theory.”

http://en.wikipedia.org/wiki/Noncommutative_geometry

Questions:

1 – As degree mapping linked von Neumann Algebras to his Minimax Theorem [MT], is there a mapping linking NCG to ET?

2 – If so, could NCG be linked to MT through John Nash game theory concepts?

– Does commutative correspond to cooperative?

– Does noncommutative correspond to noncooperative?

28 September, 2007 at 4:07 am

Doug

Hi Terence,

Another question:

Is there a mapping relating nilpotent to idempotent?

[I could not find “idemmanifold” on Google; thus this may not exist].

Physicists seem to prefer nilpotent.

Engineers seem to prefer idempotent.

28 September, 2007 at 8:55 am

Terence Tao

Dear Doug,

Von Neumann worked on a very diverse number of fields in mathematics, including ergodic theory, game theory, and (of course) von Neumann algebras. Game theory is not really connected to the other two, although they both use basic mathematical concepts such as convexity and linearity. Ergodic theory on a space X can be described to some extent using commutative von Neumann algebras such as $L^\infty(X)$, whereas non-commutative geometry can be described using non-commutative von Neumann algebras, but other than that, there is not much of a direct connection between the two. Ergodic theory, like chaos theory, is centred around the study of a dynamical system, but they emphasise different aspects of such systems (ergodic theory focuses on long-term average behavior of orbits, while chaos theory focuses on the divergence of nearby orbits and on asymptotic attractors).

In general, any two fields of mathematics selected at random are likely to share some low-level features in common, because they use the same mathematical language and fundamental concepts, and because they often study different aspects of the same mathematical objects. But this usually does not correspond to any higher-level correspondence between these two fields; those correspondences which have been found (e.g. between arithmetic geometry and algebraic geometry) tend to be rather deep and not easily discernable from looking at the low-level “keywords” of the respective fields.

28 September, 2007 at 9:44 am

Adam

Doug’s intuitive questions remind me of the work on the gradient flow of the nonlocal van der Waals field functional. A traveling wave was constructed with degree theory (a homotopy version), however, the critical droplet (a threshold between the domains of attraction of two stable states) was obtained (1999) with a careful mountain-pass construction (literature). The min-max concept seems to be quite deep, as to this date only partial reconstructions of that homoclinic have been obtained. It is an open question whether a min-max approach is unique for that problem, which also immediately gives a soliton solution of the nonlocal KdV equation.

28 September, 2007 at 10:36 am

Adam

Dear Doug: The problem with the Gibbs postulates is that they lead to a definition of a phase transition which is incompatible with the experimental one (i.e., two distinct densities coexisting at the same pressure, if you look at the liquid-gas isotherms). Maxwell’s equal area rule correction of the van der Waals equation of state is a great second step in establishing a phase transition, but it doesn’t eliminate the values of the density in the interior of the interval. For that, you need the additional van der Waals postulate of pseudoassociations of complexes of molecules (literature).

28 September, 2007 at 11:29 am

teaspoon

Terry, maybe people should have to answer a challenge question before they post?

Something like:

Please answer YES or NO: You have a theory that reconciles quantum gravity and special relativity and assumes only the Riemann Hypothesis.

28 September, 2007 at 12:11 pm

Adam

There is something fishy about the Riemann Hypothesis, due to “near counterexamples” near Gram points. The author H. M. Edwards (Riemann’s Zeta Function, Dover Publications 1974) thinks that if Riemann had known about this phenomenon, he wouldn’t have stated this conjecture. Conrey thinks any possible proof would have to be of a combinatorial nature. However, such hard problems may be beyond the technical capabilities of the human brain, much like it is impossible to win with a desktop computer anymore.

There are plenty of other (entry level) challenging problems for the foreseeable future.

28 September, 2007 at 4:46 pm

Terence Tao

Just a reminder: please keep discussions relevant to the topic at hand; in particular, physics discussions should probably be held in some other venue than this blog. [Incidentally, regarding the interactions between physics and number theory: physical intuition has proven to be quite useful in making accurate predictions about many mathematical objects, such as the distribution of zeroes of the Riemann zeta function, but has been significantly less useful in generating rigorous proofs of these predictions. In number theory, our ability to make accurate predictions on anything relating to the primes (or related objects) is now remarkably good, but our ability to actually prove these predictions rigorously lags behind quite significantly. So I doubt that the key to further rigorous progress on these problems lies with physics.]

28 September, 2007 at 6:21 pm

x

“the key to further rigorous progress on these problems lies with physics.” I can’t agree with you. Could you explain this in detail?

28 September, 2007 at 6:27 pm

Terence Tao

I doubt that the key to further rigorous progress in analytic number theory lies with physics.

29 September, 2007 at 9:28 am

Ratner’s theorems « What’s new

[…] Marina Ratner, Oppenheim conjecture, orbits, Ratner’s theorem, unipotent flows While working on my recent paper with Ben Green, I was introduced to the beautiful theorems of Marina Ratner on unipotent flows on homogeneous […]

29 September, 2007 at 10:18 am

Terence Tao

Regarding an earlier question: there is not much of a link between nilpotents (such as matrices A with $A^m = 0$ for some m) and idempotents (such as matrices A with $A^2 = A$). For instance, in the ring of diagonal matrices, there are several non-trivial idempotents but no non-trivial nilpotents; conversely, in the ring of strictly upper-triangular matrices, all matrices are nilpotent but none are idempotent (indeed, it is clear that a non-zero nilpotent cannot be idempotent).

There is however a strong link between nilpotents and unipotents; by definition, a matrix A is unipotent if and only if A-I is nilpotent (and there are similar definitions for more abstract algebras).
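As a small illustration of the three notions above, here is a minimal sketch in plain Python. The helper names (`matmul`, `is_idempotent`, `is_nilpotent`, `is_unipotent`) and the three sample matrices are chosen purely for illustration and do not come from the discussion; the nilpotency test relies on the standard fact that an n x n nilpotent matrix A already satisfies $A^n = 0$.

```python
def matmul(a, b):
    """Multiply two square matrices given as lists of rows."""
    n = len(a)
    return [[sum(a[i][k] * b[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]


def is_idempotent(a):
    """A is idempotent when A^2 = A."""
    return matmul(a, a) == a


def is_nilpotent(a):
    """A is nilpotent when A^m = 0 for some m; for an n x n matrix it
    suffices to check m = n."""
    n = len(a)
    power = a
    for _ in range(n - 1):
        power = matmul(power, a)  # after the loop, power == A^n
    return all(entry == 0 for row in power for entry in row)


def is_unipotent(a):
    """A is unipotent when A - I is nilpotent."""
    n = len(a)
    a_minus_identity = [[a[i][j] - (1 if i == j else 0) for j in range(n)]
                        for i in range(n)]
    return is_nilpotent(a_minus_identity)


# Hypothetical sample matrices, chosen only to illustrate the definitions.
E = [[1, 0], [0, 0]]  # diagonal: a non-trivial idempotent, not nilpotent
N = [[0, 1], [0, 0]]  # strictly upper-triangular: nilpotent, not idempotent
U = [[1, 1], [0, 1]]  # U - I equals N, so U is unipotent

if __name__ == "__main__":
    for name, m in (("E", E), ("N", N), ("U", U)):
        print(name, is_idempotent(m), is_nilpotent(m), is_unipotent(m))
```

Here U is obtained from N by adding the identity, which is exactly the link between nilpotents and unipotents described above.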

1 October, 2007 at 2:04 am

Daniel

Dear Terry,

It may not be much of a revelation, but some rigorous attempts at proving physically intuitive directions for the distribution of zeroes are described in:

Percy Deift, Integrable systems and combinatorial theory, Notices of the AMS (2000), 631-640,

where he builds on the computation of the limiting statistics of eigenvalues of a random GUE matrix in terms of solutions of the Painlevé II equation in:

C. A. Tracy and H. Widom, Level-spacing distributions and the Airy kernel, Comm. Math. Phys. 159 (1994), 151-174.

There was one more down-to-earth esthetic result, but I can’t quite recall it at the moment.

1 October, 2007 at 7:49 am

Alfonso Martinez

Dear Terry,

after reading your post, I wondered whether one could possibly establish a connection between your quantitative results and the behaviour of a sum of independent random variables. In this case, and as $n\to \infty$, there is also a dichotomy between variables which converge to a lattice and continuous random variables. The proof also seems to make heavy use of Fourier analysis. For these extreme cases, it is also known that saddlepoint approximations to the sum are very accurate, even for low values of $n$.

But then, one can construct random variables with “peaky” densities, say a train of Dirac deltas placed at a lattice convolved by a Gaussian density with small variance, much smaller than the lattice spacing. As one adds many random variables, one moves from a lattice-like density towards a continuous density. Using a saddlepoint approximation would require switching from one formula (the lattice-like approximation) to another (the continuous random variable case).

It may be a silly or obvious question, but do you know if there are any quantitative results valid for low values of $n$ which would automatically include the “switching” between the two extreme cases? Could your analysis be applied to this case?

1 October, 2007 at 9:01 am

Terence Tao

Dear Daniel,

There has indeed been a remarkable amount of progress in understanding eigenvalues of random matrices or random operators. But the trouble is that in number theory, objects such as the primes or the zeta function are not random; they are conjectured to be pseudorandom in a number of senses, which is why we can confidently make several predictions about their statistics, but they are still, ultimately, deterministic objects. The fundamental problem, in my opinion, is to develop tools that can rigorously establish various types of pseudorandomness properties for these number-theoretic objects; once we can establish sufficiently high levels of pseudorandomness, we should be able to rigorously show that predictions from, say, random matrix theory, are in fact accurate. Intuition from physics has helped us understand the consequences of randomness and pseudorandomness quite a bit, but has not really given us any means to rigorously establish pseudorandomness (cf. “Maxwell’s demon”, which could theoretically create extremely improbable situations which violate statistical physical reasoning, such as the second law of thermodynamics, and thus prevents such reasoning from being completely rigorous). Here it seems to me that insights from arithmetic geometry, harmonic analysis, ergodic theory and dynamical systems, combinatorics, and possibly even complexity theory, will be important.

Dear Alfonso: It seems to me that the situation you describe is more a problem in harmonic analysis and high-dimensional geometry than in ergodic theory or group theory; in particular, there does not seem to be a dynamical system present in your setting (except possibly in the asymptotic limit $n \to \infty$, in which case a Bernoulli shift system begins to make an appearance). Your problem seems to be related to that of understanding the large Fourier coefficients of a probability measure on the real line, which is a question which has been intensively studied in harmonic analysis and also additive combinatorics, but is probably not directly related to my work with Ben (other than through use of common tools such as the Fourier transform, or by touching on common themes such as the dichotomy between structure and randomness).

1 October, 2007 at 10:31 am

Emmanuel Kowalski

If we look at the pseudo-randomness related to random matrix models for values of the zeta function (and more general L-functions), we are currently in a very frustrating situation: the first tool we would like to use to prove rigorously some of the consequences of pseudo-randomness is the Riemann Hypothesis (and its generalizations) which provide cancellation in various sums that parallel what one would expect from analogue random variables. However, GRH is precisely what we are trying to understand and hope to prove!

In a few basic (and important) cases, the current knowledge of zeros of L-functions (and other related things) is sufficient to confirm the random matrix models, and to build great confidence in it (analogues over finite fields are also helpful in that respect), but the limits of what we can do are clearly understood.

(I like to think, to avoid being depressed, that this state of affairs is similar to the beginning of the Langlands Program: lots of intuitions, a very coherent picture that emerges, quite a few impressive special cases, but a great fundamental mystery still unresolved… and quite possibly the Weil Conjectures looked the same around the time they were formulated).

1 October, 2007 at 10:57 am

Terence Tao

Dear Emmanuel: In the spirit of “not getting depressed”, we can at least hope to rigorously derive strong versions of pseudorandomness from weak ones (e.g. it is conceivable that the GUE spacing of zeta could be deduced from something which looks weaker, such as GRH and pair correlations, especially if random matrix theory develops to the point where GUE statistics can be deduced from relatively weak pseudorandomness hypotheses). The Gowers inverse conjecture GI(s) is in this spirit, asserting that in order to obtain pseudorandomness in the sense of statistics of linear patterns, it suffices to establish the weaker pseudorandomness of not being correlated with nilsequences, which is a more feasible task when it comes to the primes (or proxies for the primes, such as the Mobius function) thanks to tools such as Vinogradov’s method. (I hope to discuss these issues in some subsequent posts.)

Vinogradov’s method, by the way, ultimately relies on the (additive) randomness extractor properties of integer multiplication, which is one of the few devices I know of with which one can generate rigorously pseudorandom outputs from inputs which are not known to be pseudorandom. It could be that a better understanding of this phenomenon could lead to more progress.

1 October, 2007 at 11:56 am

Emmanuel Kowalski

Yes, I definitely shouldn’t have written “the limits of what we can do are clearly understood”; it’s only what is done the way it has been done historically which seems clearly limited. New insights can come and show that previous expectations were wrong, as certainly is happening for patterns among primes.

7 October, 2007 at 4:06 pm

Doug

Hi Terence,

Speculation:

From the perspective of Bifurcation Theory and tori, is it possible to unify the work of Connes, Yau, Witten, Borcherds and Tao?

In your paper with Ben Green is this phrase “… let us briefly review what happens for higher-dimensional torii”.

It seems possible that this may relate to the Monster, proved by Richard Borcherds?

In Scientific American Magazine, November 1998, Profile: Monstrous Moonshine is True by Gibbs, the symmetry of the monster is described as a ”folded doughnut”.

This may be equivalent to toroidal symmetry?

Edward Witten recently published Three-dimensional gravity revisited [arXiv:0706.3359] apparently embracing the Monster.

There may be a way of transforming 11-D M-theory to conform with the monster description of #-complex-D, string-[or coupling]-D, time-D where #=24 for monster and #=3 nested within 3 for M-theory, particularly if there should be 8 nestings of 3 in the monster?

The Calabi-[Shing-Tung]Yau manifolds may be complex tori?

The description of these quantum manifolds may suggest a likeness to celestial bodies such as the Earth and Sun when their respective magnetospheres are included?

There may be a tenuous relation between your work with ergodic theory and that of Alain Connes with noncommutative geometry through the perspective of Chaos Theory.

7 October, 2007 at 8:42 pm

teaspoon

I think Doug has missed the fact that Publius Terentius Afer was likely born around 185 BC, the same year that then Indian emperor Brhadrata passed away, and about 2000 years before the Indian mathematician Ramanujan was born. Since we all know the relevance of Ramanujan’s modular function to the identities satisfied by Fermion strings and also the prominent role that reincarnation plays in Hinduism, is it possible that Professor Tao’s Roman playwright pre-incarnate foretold the future coming of M-theory, 2000 years before Witten?

9 March, 2008 at 8:35 pm

254A, Lecture 16: A Ratner-type theorem for nilmanifolds « What’s new

[…] series (which is the “minimal” filtration available to a group G); see for instance my paper with Ben Green on this […]

14 June, 2008 at 1:19 pm

The van der Corput’s trick, and equidistribution on nilmanifolds « What’s new

[…] with Ben Green on the quantitative behaviour of polynomial sequences in nilmanifolds, which I have blogged about previously. During my talk (and inspired by the immediately preceding talk of Vitaly Bergelson), I stated […]

30 June, 2008 at 9:50 am

Anonymous

This is just spectator curiosity, but are the results of the recent preprint http://arxiv.org/PS_cache/arxiv/pdf/0806/0806.4535v1.pdf strong enough to substitute for the Gowers inverse conjecture to establish the nondegenerate case of Dickson’s conjecture, or is more needed?

30 June, 2008 at 10:03 am

Terence Tao

Dear anonymous,

Well, firstly the results you mention (and in the parent post) are for the finite field case rather than the integer case, but they would in principle be applicable to the finite field version of Dickson’s conjecture (though I don’t think anyone has worked this out fully in all details). The more serious issue is that these results are restricted to polynomials of reasonably bounded degree. For applications to Dickson’s conjecture, one needs to apply the inverse conjecture to functions that are something like the Mobius function (or some other similarly number-theoretic object). This function is not expected to behave polynomially (indeed, this is basically the content of the Mobius-nilsequences conjecture, which is another ingredient in the program to establish the non-degenerate case of Dickson’s conjecture). [Incidentally, I’ve recently been informed that our nomenclature for this conjecture is not too accurate; “generalised Hardy-Littlewood prime tuples conjecture” would be somewhat more appropriate, if wordier, than “Dickson’s conjecture”.]

On the other hand, what these results do imply is that a “weak” form of the Gowers inverse conjecture for finite fields (in which correlation is established with a high-degree polynomial rather than a polynomial of the correct degree) will automatically imply the strong form of the conjecture.

7 July, 2008 at 12:59 pm

anonymous

What would the statement of the finite field Dickson’s / “the generalised Hardy-Littlewood prime tuples conjecture” look like? At least to me, there doesn’t seem to be a way to form an obvious analog. Maybe I’m thinking about this incorrectly, but I think of these conjectures as vast generalizations of the PNT, and I’ve never seen a result called the finite field PNT.

7 July, 2008 at 7:30 pm

Terence Tao

Dear anonymous,

The finite field PNT would be the assertion that the number of monic irreducible polynomials (the analogue of primes) of degree n in a polynomial ring F[T] over a finite field F is equal to (1 + o(1)) |F|^n / n. Actually this is quite easy to prove compared to the PNT for integers (see e.g. my post on dyadic models).
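To make the finite field PNT concrete, here is a minimal Python sketch. It uses the classical Möbius-inversion formula for the number of monic irreducible polynomials of degree exactly d over a field with q elements, namely $\frac{1}{d}\sum_{e \mid d} \mu(e)\, q^{d/e}$, and compares it with $q^d/d$. The function names are illustrative choices, and this is a numerical check of the asymptotic rather than the argument referred to above.

```python
def mobius(n):
    """Moebius function mu(n), computed by trial division."""
    result = 1
    p = 2
    while p * p <= n:
        if n % p == 0:
            n //= p
            if n % p == 0:
                return 0  # n is divisible by p^2
            result = -result
        p += 1
    if n > 1:
        result = -result  # one remaining prime factor
    return result


def count_monic_irreducibles(q, d):
    """Number of monic irreducible polynomials of degree exactly d over
    a finite field with q elements: (1/d) * sum_{e | d} mu(e) * q^(d/e)."""
    total = sum(mobius(e) * q ** (d // e)
                for e in range(1, d + 1) if d % e == 0)
    return total // d  # the sum is always divisible by d


if __name__ == "__main__":
    q = 2  # sample field size, chosen for illustration
    for d in (1, 2, 5, 10, 20):
        exact = count_monic_irreducibles(q, d)
        print(d, exact, exact * d / q ** d)  # the ratio approaches 1
```

The leading term e = 1 contributes $q^d/d$, and the remaining terms are of size at most about $q^{d/2}$, which is why the ratio in the last column tends to 1 so quickly.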

The analogue of Dickson’s conjecture would then be an assertion about the number of polynomials in F[T] of degree at most n for which a given finite collection of linear forms, with fixed polynomial coefficients, are simultaneously monic irreducible polynomials. This number should be infinite provided that there are no local obstructions, and one can also predict an asymptotic for this number by the usual heuristics, in exact analogy with the integer case (for instance, one could adapt the discussion in the introduction of my linear equations in primes paper with Ben).

20 July, 2008 at 11:36 am

The Mobius function is strongly orthogonal to nilsequences « What’s new

[…] quantitative behaviour of polynomial orbits on nilmanifolds“, which I talked about in this post. In this paper, we apply our previous results on quantitative equidistribution polynomial orbits […]

20 November, 2008 at 5:24 pm

Marker lectures II, “Linear equations in primes” « What’s new

[…] enough for our purposes, and we had to develop a quantitative analogue of this theory; see these blog posts for more discussion. Anyway, it all works, and gives asymptotics for progressions of […]

3 December, 2009 at 10:34 am

An inverse theorem for the Gowers U^4 norm « What’s new

[…] using Ratner’s theorem (or more precisely, a quantitative version of this theorem that Ben and I worked out a few years ago) by a certain subgroup H of the product of three Heisenberg groups (times an abelian group to take […]

12 February, 2010 at 10:46 pm

An arithmetic regularity lemma, an associated counting lemma, and applications « What’s new

[…] The regularity lemma is a manifestation of the “dichotomy between structure and randomness”, as discussed for instance in my ICM article or FOCS article. In the degree case , this result is essentially due to Green. It is powered by the inverse conjecture for the Gowers norms, which we and Tamar Ziegler have recently established (paper to be forthcoming shortly; the case of our argument is discussed here). The counting lemma is established through the quantitative equidistribution theory of nilmanifolds, which Ben and I set out in this paper. […]

29 May, 2010 at 2:16 pm

254B, Notes 6: The inverse conjecture for the Gowers norm II. The integer case « What’s new

[…] and also follows from the above van der Corput lemma and some tedious additional computations; see this paper of Green and myself for details. For linear orbits, this result was established by Parry and by Leon Green. Using this […]

22 July, 2015 at 11:49 am

An inverse theorem for the continuous Gowers uniformity norm | What's new

[…] theorem, together with an equidistribution theorem for nilsequences that Ben and I worked out in a separate paper, implies a continuous […]

6 August, 2015 at 10:55 am

Equidistribution for multidimensional polynomial phases | What's new

[…] nilsequence, and in which one does not necessarily assume the exponential sum is small, is given in this paper of Ben Green and myself, but it involves far more notation to even state […]

28 April, 2017 at 1:24 pm

Notes on nilcharacters and their symbols | What's new

[…] assume . Write . Now we appeal to the factorisation theorem for nilsequences (see Corollary 1.12 of this paper of myself and Ben Green to […]