Joni Teräväinen and I have just uploaded to the arXiv our preprint “The Hardy–Littlewood–Chowla conjecture in the presence of a Siegel zero”. This paper is a development of the theme that certain conjectures in analytic number theory become easier if one makes the hypothesis that Siegel zeroes exist; this places one in a presumably “illusory” universe, since the widely believed Generalised Riemann Hypothesis (GRH) precludes the existence of such zeroes, yet this illusory universe seems remarkably self-consistent and notoriously impossible to eliminate from one’s analysis.

For the purposes of this paper, a Siegel zero is a zero $\beta$ of a Dirichlet $L$-function $L(\cdot,\chi)$ corresponding to a primitive quadratic character $\chi$ of some conductor $q$, which is close to $1$ in the sense that

One of the early influential results in this area was the following result of Heath-Brown, which I previously blogged about here:

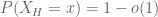

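Theorem 1 (Hardy-Littlewood assuming Siegel zero) Let $h$ be a fixed natural number. Suppose one has a Siegel zero $\beta$ associated to some conductor $q$. Then we have

for all

, where $\Lambda$ is the von Mangoldt function and $\mathfrak{S}$ is the singular series

$$\mathfrak{S} := \prod_{p \mid h} \frac{p}{p-1} \prod_{p \nmid h} \left(1 - \frac{1}{(p-1)^2}\right).$$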

In particular, Heath-Brown showed that if there are infinitely many Siegel zeroes, then there are also infinitely many twin primes, with the correct asymptotic predicted by the Hardy-Littlewood prime tuple conjecture at infinitely many scales.
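As a rough numerical illustration of the kind of asymptotic in question (and not of the Siegel zero argument itself), the sketch below compares $\frac{1}{x}\sum_{n \leq x} \Lambda(n)\Lambda(n+h)$ with the Hardy-Littlewood singular series $\prod_{p \mid h} \frac{p}{p-1} \prod_{p \nmid h} (1 - \frac{1}{(p-1)^2})$ in the twin prime case $h=2$. The function names, the cutoff `prime_bound` used to truncate the Euler product, and the scale $x = 10^5$ are all illustrative assumptions.

```python
from math import log


def sieve_tables(limit):
    """Return (primes, mangoldt) with mangoldt[n] = Lambda(n) for 0 <= n <= limit."""
    is_composite = [False] * (limit + 1)
    mangoldt = [0.0] * (limit + 1)
    primes = []
    for p in range(2, limit + 1):
        if is_composite[p]:
            continue
        primes.append(p)
        for m in range(p * p, limit + 1, p):
            is_composite[m] = True
        # Lambda(p^j) = log p for every prime power p^j <= limit.
        power = p
        while power <= limit:
            mangoldt[power] = log(p)
            power *= p
    return primes, mangoldt


def singular_series(h, prime_bound=10_000):
    """Euler product for the pair (n, n+h), truncated to primes <= prime_bound."""
    primes, _ = sieve_tables(prime_bound)
    product = 1.0
    for p in primes:
        if h % p == 0:
            product *= p / (p - 1)
        else:
            product *= 1.0 - 1.0 / (p - 1) ** 2
    return product


def mangoldt_correlation(x, h):
    """Compute sum_{n <= x} Lambda(n) * Lambda(n + h) directly."""
    _, mangoldt = sieve_tables(x + h)
    return sum(mangoldt[n] * mangoldt[n + h] for n in range(1, x + 1))


if __name__ == "__main__":
    x, h = 100_000, 2
    print("singular series:", singular_series(h))
    print("normalised sum :", mangoldt_correlation(x, h) / x)
```

For odd $h$ the factor at $p=2$ vanishes, so the truncated product is exactly zero, matching the fact that $n$ and $n+h$ then cannot both be odd primes.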

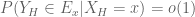

Very recently, Chinis established an analogous result for the Chowla conjecture (building upon earlier work of Germán and Katai):

Theorem 2 (Chowla assuming Siegel zero) Let $h_1,\dots,h_\ell$ be distinct fixed natural numbers. Suppose one has a Siegel zero $\beta$ associated to some conductor $q$. Then one has

in the range

, where $\lambda$ is the Liouville function.
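To get a feel for the cancellation that the Chowla conjecture predicts, here is a small brute-force sketch that tabulates the Liouville function $\lambda(n) = (-1)^{\Omega(n)}$ and sums products of its shifts. It is only a numerical experiment at a tiny scale; the function names and the sample shifts are assumptions chosen for illustration, and nothing here uses a Siegel zero.

```python
def liouville_table(limit):
    """Return lam with lam[n] = (-1)^Omega(n) for 1 <= n <= limit (lam[0] unused)."""
    smallest_factor = list(range(limit + 1))
    for p in range(2, int(limit ** 0.5) + 1):
        if smallest_factor[p] == p:  # p is prime
            for m in range(p * p, limit + 1, p):
                if smallest_factor[m] == m:
                    smallest_factor[m] = p
    lam = [0] * (limit + 1)
    if limit >= 1:
        lam[1] = 1
    for n in range(2, limit + 1):
        # Complete multiplicativity: lambda(n) = -lambda(n / p) for a prime p | n.
        lam[n] = -lam[n // smallest_factor[n]]
    return lam


def liouville_correlation(x, shifts):
    """Compute sum_{n <= x} prod_j lambda(n + h_j) for the given shifts h_j."""
    lam = liouville_table(x + max(shifts))
    total = 0
    for n in range(1, x + 1):
        term = 1
        for h in shifts:
            term *= lam[n + h]
        total += term
    return total


if __name__ == "__main__":
    x = 100_000
    print(liouville_correlation(x, [1, 2]) / x)
```

The normalised sum printed at the end should be small compared with $1$, in line with the conjectured $o(x)$ behaviour.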

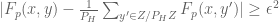

In our paper we unify these results and also improve the quantitative estimates and range of $x$:

Theorem 3 (Hardy-Littlewood-Chowla assuming Siegel zero) Let $h_1,\dots,h_k,h'_1,\dots,h'_\ell$ be distinct fixed natural numbers with $k \leq 2$. Suppose one has a Siegel zero $\beta$ associated to some conductor $q$. Then one has

for

for any fixed $\varepsilon > 0$.

Our argument proceeds by a series of steps in which we replace $\Lambda$ and $\lambda$ by more complicated looking, but also more tractable, approximations, until the correlation is one that can be computed in a tedious but straightforward fashion by known techniques. More precisely, the steps are as follows:

- (i) Replace the Liouville function $\lambda$ with an approximant $\lambda_{\mathrm{Siegel}}$, which is a completely multiplicative function that agrees with $\lambda$ at small primes and agrees with $\chi$ at large primes.

- (ii) Replace the von Mangoldt function $\Lambda$ with an approximant $\Lambda_{\mathrm{Siegel}}$, which is the Dirichlet convolution $\chi * \log$ multiplied by a Selberg sieve weight $\nu$ to essentially restrict that convolution to almost primes.

- (iii) Replace $\lambda_{\mathrm{Siegel}}$ with a more complicated truncation $\lambda_{\mathrm{Siegel}}^\sharp$ which has the structure of a “Type I sum”, and which agrees with $\lambda_{\mathrm{Siegel}}$ on numbers that have a “typical” factorization.

- (iv) Replace the approximant $\Lambda_{\mathrm{Siegel}}$ with a more complicated approximant $\Lambda_{\mathrm{Siegel}}^\sharp$ which has the structure of a “Type I sum”.

- (v) Now that all terms in the correlation have been replaced with tractable Type I sums, use standard Euler product calculations and Fourier analysis, similar in spirit to the proof of the pseudorandomness of the Selberg sieve majorant for the primes in this paper of Ben Green and myself, to evaluate the correlation to high accuracy.

Steps (i), (ii) proceed mainly through estimates such as (1) and standard sieve theory bounds. Step (iii) is based primarily on estimates on the number of smooth numbers of a certain size.
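The first two steps can be sketched in a toy model. The code below is only a sketch under loud assumptions: the character is taken to be the Legendre symbol modulo a small prime `q` (which certainly has no Siegel zero), the cutoff `threshold` between “small” and “large” primes is arbitrary, the convolution in step (ii) is assumed to be of the form $\chi * \log$ (natural in view of $\Lambda = \mu * \log$ when $\chi$ imitates $\mu$), and the Selberg sieve weight is omitted entirely. All function names are hypothetical.

```python
from math import log


def legendre_symbol(a, q):
    """Quadratic character mod an odd prime q, via Euler's criterion."""
    a %= q
    if a == 0:
        return 0
    return 1 if pow(a, (q - 1) // 2, q) == 1 else -1


def prime_factors_with_multiplicity(n):
    """Return the prime factors of n >= 1, repeated according to multiplicity."""
    factors = []
    p = 2
    while p * p <= n:
        while n % p == 0:
            factors.append(p)
            n //= p
        p += 1
    if n > 1:
        factors.append(n)
    return factors


def toy_liouville_approximant(n, q, threshold):
    """Completely multiplicative g with g(p) = -1 = lambda(p) for p <= threshold
    and g(p) = chi(p) for p > threshold (toy model of step (i))."""
    value = 1
    for p in prime_factors_with_multiplicity(n):
        value *= -1 if p <= threshold else legendre_symbol(p, q)
    return value


def chi_convolved_with_log(n, q):
    """Dirichlet convolution (chi * log)(n) = sum_{d | n} chi(d) log(n / d)."""
    return sum(
        legendre_symbol(d, q) * log(n // d)
        for d in range(1, n + 1)
        if n % d == 0
    )


if __name__ == "__main__":
    q = 7
    # chi(3) = chi(5) = -1 mod 7, so chi * log vanishes at 15 and equals log 3 at 9,
    # just as the von Mangoldt function does.
    print(chi_convolved_with_log(15, q), chi_convolved_with_log(9, q), log(3))
```

On integers all of whose prime factors $p$ satisfy $\chi(p) = -1$, the convolution $\chi * \log$ agrees with $\Lambda$; the discrepancy comes from primes with $\chi(p) \neq -1$.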

The restriction $k \leq 2$ in our main theorem is needed only to execute step (iv) of this argument. Roughly speaking, the Siegel approximant $\Lambda_{\mathrm{Siegel}}$ to $\Lambda$ is a twisted, sieved version of the divisor function $\tau$, and the types of correlation one is faced with at the start of step (iv) are a more complicated version of the divisor correlation sum

$$\sum_{n \leq x} \tau(n+h_1) \cdots \tau(n+h_k).$$

Step (v) is a tedious but straightforward sieve theoretic computation, similar in many ways to the correlation estimates of Goldston and Yildirim used in their work on small gaps between primes (as discussed for instance here), and then also used by Ben Green and myself to locate arithmetic progressions in primes.
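To make the divisor correlation sums mentioned in connection with step (iv) concrete, here is a short sketch. For a single factor, the sum $\sum_{n \leq x} \tau(n)$ can be evaluated exactly by the classical Dirichlet hyperbola method; for several shifted factors the code simply sums by brute force, which is only feasible at toy scales and says nothing about the asymptotics. The function names and sample shifts are assumptions made for illustration.

```python
from math import isqrt


def divisor_sum_hyperbola(x):
    """Compute sum_{n <= x} tau(n) via the Dirichlet hyperbola method."""
    root = isqrt(x)
    return 2 * sum(x // d for d in range(1, root + 1)) - root * root


def divisor_table(limit):
    """Return tau with tau[n] = number of divisors of n for 1 <= n <= limit."""
    tau = [0] * (limit + 1)
    for d in range(1, limit + 1):
        for m in range(d, limit + 1, d):
            tau[m] += 1
    return tau


def divisor_correlation(x, shifts):
    """Brute-force sum_{n <= x} prod_j tau(n + h_j)."""
    tau = divisor_table(x + max(shifts))
    total = 0
    for n in range(1, x + 1):
        term = 1
        for h in shifts:
            term *= tau[n + h]
        total += term
    return total


if __name__ == "__main__":
    print(divisor_sum_hyperbola(1000), divisor_correlation(1000, [0]))
    print(divisor_correlation(1000, [0, 1]))
```

The hyperbola method needs only about $\sqrt{x}$ operations, whereas the brute-force table costs roughly $x \log x$.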

59 comments


15 September, 2021 at 11:17 am

asahay22

It looks like you missed replacing a \cite with a link in the description of Step (v).

[Corrected, thanks – T.]

15 September, 2021 at 11:22 am

gexahedron

If both of the worlds, one where the generalized Riemann hypothesis is true and one where Siegel zeros exist, are self-consistent, then maybe they are both correct (“real”)?

15 September, 2021 at 11:57 am

Anonymous

The mere existence of a Siegel zero seems helpful for finding “good approximants”, but is it really necessary ?

15 September, 2021 at 12:11 pm

Michele

The post and the various references of Prof. Tao concerning this topic are very interesting. With regard to the Hardy-Littlewood Conjecture, it is fundamental in several areas of mathematics, mainly in Number Theory. But there are also various applications in some sectors of Theoretical Physics.

https://www.academia.edu/45582699/

https://www.academia.edu/45586191/


16 September, 2021 at 12:08 am

Matc

Is there a very hand-waving intuition as to why $\lambda$ pretending to be like the exceptional character $\chi$ helps here? Is there some line of reasoning like “$\lambda$ behaves like $\chi$ and $\chi$ is periodic, so $\lambda$ is “periodic” in some vague sense and this prevents some cancellation and thus gives the main term”?

16 September, 2021 at 2:18 am

Anonymous

This is sort of right. The Liouville function is difficult to understand because it is related to the zeros of the zeta function, and we don’t have good unconditional results on zeta zeros. A Siegel zero forces the associated quadratic character to nearly behave like the Liouville function, so one can replace Liouville by a Dirichlet character. As you noted, Dirichlet characters are very nice because they are periodic and you can do Fourier analysis and all sorts of useful things with them.

17 September, 2021 at 8:04 am

Terence Tao

One standard way is to start with the Dirichlet series identity

$$\sum_{n=1}^\infty \frac{\lambda(n)\chi(n)}{n^s} = \frac{L(2s,\chi^2)}{L(s,\chi)}$$

(valid for $\mathrm{Re}(s) > 1$). If $L(s,\chi)$ has a zero $\beta$ near $1$, this identity suggests that the sum $\sum_n \frac{\lambda(n)\chi(n)}{n^s}$ will be large for $s$ near $1$, and hence there is not much cancellation in this sum. As such, $\lambda$ should be biased to having the same sign as $\chi$ fairly often (i.e., it “pretends” to be like $\chi$), which is a phenomenon one can quantify using the concept of “pretentiousness” in “pretentious number theory”.
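For reference, one identity of this type can be verified directly from Euler products. The following is a sketch under the assumptions that $\chi$ is a Dirichlet character, $\lambda$ is the Liouville function and $\mathrm{Re}(s) > 1$; the choice of this particular identity is an assumption made for illustration. Since $\lambda\chi$ is completely multiplicative with value $-\chi(p)$ at each prime $p$,

$$\sum_{n=1}^\infty \frac{\lambda(n)\chi(n)}{n^s} = \prod_p \left(1 + \frac{\chi(p)}{p^s}\right)^{-1} = \prod_p \frac{1 - \chi(p) p^{-s}}{1 - \chi(p)^2 p^{-2s}} = \frac{L(2s,\chi^2)}{L(s,\chi)}.$$

A zero of $L(s,\chi)$ close to $s=1$ therefore makes the right-hand side large nearby, which is only possible if the terms $\lambda(n)\chi(n)$ do not cancel much.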

11 October, 2021 at 3:57 am

Harry

This may lead to a new theory.

16 September, 2021 at 12:31 am

Michael

The definition of the singular series with the gothic letter, the slash below the second product missed the divide sign

[Corrected, thanks – T]

16 September, 2021 at 1:40 am

Revantha

Why is it surprising that we can derive interesting results after assuming the existence of Siegel zeroes (since it is widely believed that there are no Siegel zeroes) ?

Is the point not about any particular result, but rather that various not-especially-related results are derivable from the same assumption ?

17 September, 2021 at 8:19 am

Terence Tao

Well, there are a couple reasons:

1. By exploring this presumably illusory “Siegel zero universe” more extensively, we may eventually be able to hit upon a contradiction, and thus finally resolve the notorious problem of whether Siegel zeroes exist. This could in turn lead to substantial progress more generally towards GRH. (One should caution though that historically every such discovery of an apparent contradiction has fallen apart under more careful scrutiny; the Siegel zero universe seems highly resistant to being destroyed!)

2. There are several results in analytic number theory (e.g., Linnik’s theorem) that are proven unconditionally by splitting into two cases, one where Siegel zeroes exist, and one where they don’t, and pursuing a separate argument to handle each case. So this sort of result could end up being one half of a later unconditional result. The non-Siegel-zero case usually comes with effective bounds, so it is of particular interest to see if the Siegel zero case also has effective estimates, as this would lead to effective unconditional estimates.

3. Having a good working understanding of the Siegel zero “alternate universe” helps one detect errors (or at least raise serious red flags) in claimed results in analytic number theory that are inconsistent with that universe, such as results that breeze through various “parity barriers” without demonstrating any awareness at all of the potential existence of Siegel zeroes. Sometimes one can narrow down where the specific error occurs in a manuscript by testing individual assertions in that manuscript under the hypothesis of a Siegel zero to find out the first place where an inconsistency occurs. In a similar spirit, the Siegel zero universe places barriers to improving the constants in various bounds (e.g., the constants in the large sieve inequality) and allows one to assert that certain results are “optimal at the current level of technology” by demonstrating that any further improvement in the bounds would be incompatible with the existence of Siegel zeroes.

4. In some cases, such as in our paper, the Siegel zero universe is *more* computationally tractable than the GRH universe that one expects to be the real one; GRH does not let us prove either the Hardy-Littlewood or Chowla conjectures at our current level of technology! This allows us to develop calculations to a level of precision that is not available on GRH, which can help develop technology that will one day also be useful for applications outside the Siegel zero universe. For instance, the techniques in this paper seem to be adaptable to allow for relatively straightforward calculations for the predicted asymptotics for divisor correlations, even if we are still missing the technology to rigorously establish these correlations in most cases (right now we can basically only handle a few special cases).

5. Related to 4., if one can prove a conjecture such as the Hardy-Littlewood or Chowla conjecture in the Siegel zero universe, it provides some heuristic support for this conjecture also being true in the real universe: it shows that such conjectures are provable “in principle”, albeit not in the universe that we want them to be true in. Nevertheless, some of the ideas, calculations, methods, or insights used to resolve the conjecture in the Siegel zero universe might also be of use attacking the same conjecture in the real universe.

6. It may end up that the Siegel zero universe is indicative of some other alternate, exotic form of number theory which is actually self-consistent. An analogy here is with the parallel postulate, which was widely believed to be true in the real world. A systematic exploration of the alternate universe where the parallel postulate failed eventually led to the discovery of self-consistent non-Euclidean geometries. Perhaps there is some sort of nonstandard model of some sort of “generalized number theory” in which Siegel zeroes do exist? (One could argue for instance that elliptic curves of high (analytic) rank sort of count as a weak Siegel zero, in that their associated L-functions have a zero on the real axis, though so deep inside the critical strip that their influence is rather weak.)

17 September, 2021 at 2:07 pm

Revantha

Thanks, that clarifies. It is interesting to encounter these persuasive reasons given that the approach is initially unexpected from a logic perspective.

17 September, 2021 at 5:02 pm

Siegelvsgrh

Can we conclude a statement is unconditionally valid if it is valid conditionally on a Siegel zero and valid conditionally on GRH? Is it a possible reason 7?

18 September, 2021 at 8:32 am

Terence Tao

At a heuristic level perhaps, but at a fully rigorous level there is of course a large middle ground in which Dirichlet L-functions have zeroes that are neither close to 1 nor on the critical line. There is a sort of “unified universe” in which one makes instead the assumption that all zeroes of L-functions lie on either the critical line or the real axis, and there are some interesting conclusions to be drawn there; in particular, Sarnak and Zaharescu showed in this case that there are strong limits to the strength of a Siegel zero in this universe.

18 September, 2021 at 2:03 pm

Siegelvsgrh

Essentially the zero free region improvement and error bound are related. So are you saying there is an error bound (above the optimal GRH bound) below which Siegel zeros can be ruled out?

30 September, 2021 at 8:40 pm

duck_master

Random thought: Do you know if it’s possible to use the assumed existence of the Siegel zero to obtain any nontrivial amount of control on sums involving divisor functions (especially, I presume, in the regime where the currently known Siegel-zero-based results operate)? Since we’re assuming the (presumably illusory) existence of a Siegel zero, we may as well make full use of it.

Another random thought: Since we expect $\lambda$ to behave like $\chi$ in some kind of statistical pointwise sense for inputs of the relevant size, not only should we expect “exceptional primes” to be rare, but we should also expect them to prefer being at places modulo $q$ such that $\chi$ is closer to -1, rather than at places where the exceptional character gives a value far away from -1.

3 October, 2021 at 4:49 pm

Terence Tao

The divisor functions are not sensitive to zeroes of the zeta function or Dirichlet L-functions, since their Dirichlet series involves zeta functions in the numerator rather than the denominator. So it does not appear that Siegel zeroes are particularly helpful in understanding these functions.

Note that a quadratic character $\chi$ already only takes on two values, $+1$ and $-1$, and half of the $\phi(q)$ reduced residue classes will take on the value $+1$ and half will take on the value $-1$. The exceptional primes are those in the first category (as well as the small number of primes that divide $q$) and the non-exceptional ones are the second category.
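This split is easy to check numerically in a toy case. The sketch below assumes the character is the Legendre symbol modulo a small odd prime `q` (a stand-in only; such a tiny modulus has nothing to do with an actual Siegel zero), and treats a prime as exceptional when the character value is not $-1$. The function names are hypothetical.

```python
def quadratic_character_values(q):
    """Values of the Legendre symbol mod an odd prime q on residues 1..q-1."""
    values = {}
    for a in range(1, q):
        # Euler's criterion: a^((q-1)/2) is 1 mod q exactly for quadratic residues.
        values[a] = 1 if pow(a, (q - 1) // 2, q) == 1 else -1
    return values


def split_primes_by_character(q, bound):
    """Split primes p <= bound into those with chi(p) != -1 and chi(p) == -1."""
    values = quadratic_character_values(q)
    exceptional, non_exceptional = [], []
    for p in range(2, bound + 1):
        if any(p % d == 0 for d in range(2, int(p ** 0.5) + 1)):
            continue  # p is composite
        chi_p = 0 if p % q == 0 else values[p % q]
        (non_exceptional if chi_p == -1 else exceptional).append(p)
    return exceptional, non_exceptional


if __name__ == "__main__":
    values = quadratic_character_values(7)
    print(sum(v == 1 for v in values.values()), sum(v == -1 for v in values.values()))
    print(split_primes_by_character(7, 30))
```

For a generic modulus the two lists of primes have comparable size; the point of a Siegel zero is that the first list becomes unusually sparse at the relevant scales.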

16 September, 2021 at 9:17 pm

Lior Silberman

In the statement of Conjecture 1.3 in the paper, I think  should be : any Liouville factors should cause cancellation.

[Thanks, this will be fixed in the next revision of the ms. -T]

17 September, 2021 at 12:29 pm

Anonymous

In theorem 3, the estimate is dependent on $k$ but not on $\ell$ (also there is a restriction on $k$ but not on $\ell$) – this asymmetry between $k$ and $\ell$ seems incorrect.

18 September, 2021 at 8:26 am

Terence Tao

Actually, this asymmetry seems inherent to our current state of understanding; under the assumption of a Siegel zero, the Liouville function behaves like the exceptional character $\chi$ which is relatively easy to control, while the von Mangoldt function behaves like a twisted version of the divisor function $\tau$ which is moderately difficult to control. In particular we can only handle up to two copies of the latter, but an unlimited number of copies of the former, and our bounds worsen (and hypotheses tighten) as $k$ increases from zero to two.

17 September, 2021 at 2:13 pm

Anonymous

If there is no Siegel zero, is it still possible to use Dobner’s proof of a generalized Newman’s conjecture for a large class of Dirichlet Series (containing all Dirichlet L-functions associated to primitive characters) by using suitable deformations of these L-functions with positive deformation parameter – thereby ensuring the existence of a Siegel zero – which can be used to find “good approximants” and corresponding estimates as described in this post?

It seems that the deformation parameter should be optimized (as a function of ) to have a “sufficiently good” quality parameter in a corresponding estimate (analogous to theorem 3).

18 September, 2021 at 8:29 am

Terence Tao

Unfortunately there does not seem to be much interaction between Siegel zeroes and heat flow type deformations of zeta functions (or L-functions). Note for instance that such deformations fail to have an Euler product, so their number-theoretic impact is sharply limited (indeed, in practice, the only way number theory interacts with the deformations is through the connection between number-theoretic objects such as the primes, and the original Riemann zeta function $\zeta$.)

18 September, 2021 at 3:36 am

Anonymous

In theorem 3, it seems that  (in the exponent of the lower bound for $x$) should be , and the estimate error term should have its implied constant be dependent on $\varepsilon$.

[Corrected, thanks – T.]

19 September, 2021 at 1:27 am

Quill

Not a relevant question, but do the news and social media affect your productivity these days, and how do you manage your consumption of them? I found it interesting that Timothy Gowers said on his Twitter feed that Twitter is affecting his work, and that he is taking a break from it this month of September. He seems pretty concerned about the climate crisis and also comments on current events and social issues.

19 September, 2021 at 4:56 am

Raphael

What about the ‘other direction’ when it comes to GRH violations, i.e. the assumption of zeros very close to x=1/2? Can we expect anything similarly fruitful?

19 September, 2021 at 5:31 am

Anonymous

and what about allowing almost zeros? From the general heuristics it seems the result should be very similar.

19 September, 2021 at 5:48 am

Anonymous

For example an arithmetic progression of Siegel zero like arguments of the L-function converging to zero?

1 October, 2021 at 11:32 am

Alex

Siegel zeros do not exist.

1 October, 2021 at 11:52 am

Anonymous

Is it possible to use these results on the Chowla conjecture (in the presence of a Siegel zero) to get new information on the distribution of such zeros?

1 October, 2022 at 7:37 pm

RH over finite fields

Deligne demonstrated RH over finite fields. What would have been the analogous consequences of a Siegel-type zero in that case? Is there a meta reason why an analog of Siegel zeros is not present in these cases?

3 October, 2022 at 11:04 pm

Terence Tao

This may be a rather unsatisfyingly tautological answer, but the meta reason in the finite field case is that we know how to prove the Riemann hypothesis in that setting, rendering the Siegel zero scenario counterfactual there. If we could similarly prove RH in the number field setting, we would also have finally eliminated Siegel zeroes from that context also; but as dearly as we would love to do so, nobody has successfully managed to achieve this.

13 November, 2022 at 6:52 pm

hezhigang

Hello, Prof. Tao, I’d like to ask a naive question. Yitang Zhang has posted a paper on the Landau-Siegel zero, “Discrete mean estimates and the Landau-Siegel zero” (https://arxiv.org/abs/2211.02515), in which it is proved that

$$L(1,\chi)\gg (\log D)^{-2022}$$

Might it be right and meaningful?

14 November, 2022 at 2:34 am

Anonymous

It seems that the appearance of the exponent 2022 (in the year 2022) is not a pure coincidence !

15 November, 2022 at 7:33 am

valuevar

That’s just a bit of (deliberate) humor. If the paper can be straightened out, i.e., if it is essentially correct (it seems to be a bit of a mess at present), then the constant is very unlikely to be the best that the method can give. Rather, there’s a trade-off at some point – improving constants can mean introducing complications. It’s a bit like the accountants of some extremely rich person (Murdoch’s?) declaring his yearly income being 452452452 euros, or something like that.

15 November, 2022 at 9:03 pm

David Roberts

Zhang claims his methods could get the exponent down to less than 1000, but that he was of course being a bit cute with organising the constants to get 2022.

14 November, 2022 at 8:27 am

Terence Tao

At this point the essential correctness of the manuscript is unconfirmed. There are a number of misprints and technical issues in the paper (mostly centering around Sections 11 and 12) that are hindering the verification process and which I have forwarded to Yitang in order to request clarification. For instance, there are references to non-existent equations (8.25) and (8.26) on pages 64, 65, 67, (10.) on page 67, (15.) on pages 98 and 99, “(4) and (4)” on pages 67 and 69, “(5) and (5)” on page 63, and (14) on page 109; there also appears to be a reference missing completely just before Lemma 12.3 on page 70 (as well as just before the aforementioned reference to (14) on page 109), and the function that appears from page 96 onwards is never defined in the paper. As a consequence, several steps in the manuscript currently have no valid justification provided. It is possible that these (as well as some more serious issues) can be corrected, but it will likely take some time before the process resolves (in particular, I would not want to pressure Yitang to upload a hastily edited revision of the manuscript instead of a carefully proofread one), and so patience is advised.


15 November, 2022 at 5:46 am

Anonymous

“in particular, I would not want to pressure Yitang to upload a hastily edited revision of the manuscript instead of a carefully proofread one.” Very well said. Which math publication might seek for his carefully ‘proofread’ one? Is there ANY ? Or is it too early to tell still?

16 November, 2022 at 1:34 am

Anonymous

In Yitang’s arxiv source, he doesn’t use \ref{}, “(8.25)” is explicitly typed in.

19 November, 2022 at 2:48 am

Yihong

Dear Professor Tao,

I would like to ask whether the correctness of “The logarithmically averaged Chowla and Elliott conjectures for two-point correlations” on the arxiv is confirmed.

I cannot resolve the following issue:

As I understand, Markov’s inequality after (3.14) implies that

, where  is the set of ALL good values of ,

and not

, for an individual .

Thus, to obtain  after the proof of Lemma 3.5, we need the conclusion of Lemma 3.3 to be

,

and not

.

20 November, 2022 at 2:00 pm

Terence Tao

The bound  for  implies  from the law of total probability, which in this case is

5 November, 2023 at 3:21 am

Anonymous

Is the quantity  in the Hoeffding inequality for the set of all  such that

sufficient when we go back to the individual  and to the original logarithmically averaged two-point correlation? Obviously, it would suffice if we had the individual inequalities for the set of all  such that

[I am afraid I do not understand the question. Is there a specific step in the paper that you are requesting clarification on? If so, please provide a precise reference. You may also find the alternate presentation of the argument at https://joelmoreira.wordpress.com/2018/11/04/taos-proof-of-logarithmically-averaged-chowlas-conjecture-for-two-point-correlations/ to be helpful. -T]

21 November, 2022 at 11:41 pm

Yihong

This is unsatisfactory. We need the bound , for an individual . Very frustrating.

and not

22 November, 2022 at 8:39 am

Terence Tao

No; we only need the bound . There was a typo in my previous invocation of the law of total probability; it should be

noting of course that .

8 December, 2022 at 11:41 pm

Yihong

The event  in the invocation of the law of total probability cannot depend on the events  of the partition.

26 November, 2022 at 5:46 am

Yihong

The event , with  from Lemma 3.5, is defined correctly if  is restricted to the space $[1, \dots , P_{H}]$. If the space of  is larger, the set of all locations of the fixed -tuple  among the values of  is NOT a union of the FULL residue classes mod .

6 December, 2022 at 2:36 am

Anonymous

Is the Hoeffding inequality applicable to  when the sum is not over all  but over an unknown subset of ?

20 January, 2023 at 5:32 am

Anonymous

Do we now understand better whether Prof. Zhang’s technique works?

16 November, 2022 at 1:42 am

Nuevo artículo de Yitang Zhang sobre los ceros de Landau-Siegel - La Ciencia de la Mula Francis

[…] that it is going to be a long process. John Baez echoes on Twitter a comment by Terence Tao on his blog, one of the few people who will have to give their approval to the proof so that it […]

17 November, 2022 at 10:30 pm

La Fields que nunca fue. – Luis Alexandher

[…] Terry Tao has spoken on the matter. Someone on his post discussing the topic of Landau-Siegel zeros commented the following (I translated them into […]

18 November, 2022 at 9:51 pm

Drugengmen

Dear Professor Tao

I found such a paper on Zeta Function on the Internet. The author uses the method of Laplace inverse transformation to link the zeta function with the real function, draws some conclusions, and claims to have discussed it with Sir Michael F Atiyah.

It seems that I have never seen such a research method and perspective before. How do you evaluate it? Thanks!

The following is the address of the paper:

https://arxiv.org/abs/1807.00849

19 November, 2022 at 4:06 pm

David Roberts

Unfortunately at that time Atiyah himself was proposing major results that were not sound. His approval is not a silver bullet, and should even be treated with caution. In any case, the author’s claim in the abstract that complex functions are difficult to understand because they cannot be graphed seems untrue, since people know a very great deal about complex functions. Mathematicians do much more to study real functions than graph them, and actual visualisations are but one tool, and not generally a conclusive one.

19 November, 2022 at 10:29 pm

Drugengmen

As far as I understand, the author is saying that the graph of a complex function is difficult to draw only relative to that of a real function, not that it is absolutely impossible to draw. The zeta function may also be one of the complex functions that are difficult to draw.

As for Atiyah’s proof, since he did not provide a detailed demonstration, it was indeed impossible to tell whether it was correct or not.

19 November, 2022 at 11:07 pm

David Roberts

I’m not talking about Atiyah’s proof(s), but his critical faculties later in life. Getting the thumbs-up from Atiyah shortly before his death is not the same as getting his approval in, say, the 1980s.

21 November, 2022 at 1:53 am

Drugengmen

I don’t think what Atiyah said is the key point. The author mentioned it probably just to attract other people’s attention

Do you find any obvious or significant mistakes in this paper? So far, I haven’t found it, but the author’s research perspective and method should be relatively new.

21 November, 2022 at 2:45 am

David Roberts

I don’t know the area, so I can only assess it based on generic criteria, like trying to appeal to authority in the abstract, or saying how hard it is to study things because they cannot be graphed. These are not the sort of things that fill me with confidence. If the paper correctly produces a statement that is equivalent to the RH, then that is perhaps mildly interesting, but there are many many such statements, none of them any easier than RH. The author says in the abstract that the innovation in the paper “greatly simplifies” the problem, which I presume is where a mistake may be hiding.

This is before I open the paper. When I do so I see unexplained notation on the first page that looks like a typo (but which I later find is not), and a claim of the proof of RH. The multi-coloured typesetting and large font claims with multiple exclamation marks are not a good sign. The methods are very elementary, and not the sort of thing one expects will be able to prove the RH, based on my outsider understanding of analytic number theory. Since I don’t know the area, I wouldn’t be spending any time trying to understand where the error surely lies, but this type of paper can be found replicated hundreds of times over (sometimes with the opposite claim!), all of which amount to not much interesting mathematics. So I’m afraid to burst your bubble, but it is more worth investing time in papers that do genuinely new things that are firmly established and verified.

21 November, 2022 at 6:25 pm

Drugengmen

So I understand that your doubts about this paper are based mainly on its writing style rather than its content; is that right?

I don’t know why the author uses different color fonts, or he just wants to highlight what he thinks is important. But I have read some books and articles on number theory, and I wonder whether it is more likely to make objective comments than you?

As far as I have read, no one has ever studied the zeta function with the method of Laplace inverse transformation and drawn the corresponding image. I think this is an important new direction, and I also get some interesting inspiration from that. If professional mathematicians also try to research in this direction, they will get many more important results, I think.

As for the author’s argument, of course, there may be ambiguity or even errors, which requires professional mathematicians to judge. So I would ask Professor Tao or other mathematicians to have a look and comment.

I think whether the tools used in the paper are “elementary” is not the basis for whether the content is correct. As the author’s research direction is different from that of predecessors, the mathematical tools used are naturally different.

22 November, 2022 at 12:48 am

David Roberts

Prof Tao has already written helpful comments that would address (maybe slightly indirectly) your question, so I think it appropriate to point to the addendum at https://terrytao.wordpress.com/career-advice/dont-prematurely-obsess-on-a-single-big-problem-or-big-theory/ or the advice at https://terrytao.wordpress.com/career-advice/be-sceptical-of-your-own-work/

22 November, 2022 at 7:29 am

Drugengmen

Thanks for your answer. Professor Tao’s words are applicable to most situations. In fact, I have advised some people who are addicted to “studying big problems” before. In my opinion, many of their works are obviously wrong or childish.

But there are always exceptions. Now this paper I am referring to, with my mathematical knowledge, I cannot see where its mistakes are. So I hope Professor Tao or other mathematicians can make targeted comments.

Only mathematical reasons can persuade me, not other things.

9 August, 2023 at 5:27 am

Fields Medal Winner Terence Tao Comments on Yitang Zhang's Landau-Siegel Zeros Conjecture Paper - Tech News Comdash

[…] Tao, an Australian mathematician and winner of the Fields Medal, said on November 14 that he had read the recent paper by Yitang Zhang on proving the Landau-Siegel zeros conjecture. Tao commented that the basic accuracy of the paper has not yet been confirmed, and there are some […]